Real-time VAD using 300-3400 Hz FFT to trigger scripts on speech start/stop, with sensitivity tuning, live audio level graph, and TTS pause.

How to add the Voice Activity Detection robot skill

- Load the most recent release of ARC (Get ARC).

- Press the Project tab from the top menu bar in ARC.

- Press Add Robot Skill from the button ribbon bar in ARC.

- Choose the Audio category tab.

- Press the Voice Activity Detection icon to add the robot skill to your project.

Don't have a robot yet?

Follow the Getting Started Guide to build a robot and use the Voice Activity Detection robot skill.

How to use the Voice Activity Detection robot skill

The Voice Activity Detection (VAD) skill listens to your microphone in real time and fires scripts when speech begins or ends. It uses FFT (Fast Fourier Transform) analysis on the 300-3400 Hz speech band to distinguish voice from background noise, filtering out short impulsive sounds like knocks and clicks.

Typical use: trigger a speech recognition skill when someone starts talking, and stop it when they go silent.

Key Features:

- Speech Detection:

- Detects when speech starts (Speech Begin) and stops (Speech End).

- Customizable Actions:

- Allows users to attach custom scripts that execute automatically when speech starts or stops. For example, you can trigger robot movements, lights, or other interactions based on speech activity.

- Real-Time Audio Visualization:

- Displays a live graph of the detected speech level, giving a visual representation of the audio activity.

- Adjustable Sensitivity:

- Includes settings to fine-tune detection parameters, such as silence thresholds, for optimal performance in various environments.

Practical Applications:

- Interactive Robots:

- Enable your robot to react to speech dynamically, such as greeting people when they start talking or pausing when they stop.

- Hands-Free Control:

- Use speech detection to trigger actions without needing additional input devices.

- Speech Analysis:

- Visualize audio levels for debugging or fine-tuning robot behavior in different environments.

This robot skill combines robust speech detection with seamless integration into Synthiam ARC, enabling your robot to better understand and respond to its surroundings.

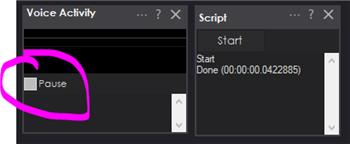

Main Window

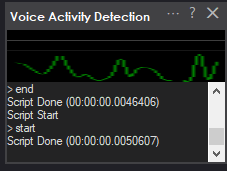

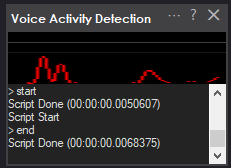

Voice Detected: The graph turns green when a voice is detected, and the voice start script executes.

Voice Not Detected: The graph turns red when no human voice is present, and the voice end script executes.

Pause: You can pause speech detection by checking the Pause checkbox on the main form. Detection is also paused automatically while any of the Audio.say() scripting commands is speaking, and it is unpaused when the speaking has completed. This prevents the VAD from detecting the robot's own speech and triggering a false positive.

Graph

A real-time level meter shows the detected audio energy relative to the sensitivity threshold:

| Color | Meaning |

|---|---|

| Red | Listening - no speech currently detected |

| Green | Speech is active |

| Yellow | Detection is paused |

The graph bar fills from 0-100% as energy approaches and exceeds the threshold.

Pause Checkbox

Checking Pause suspends all voice detection. The graph stops updating and no scripts will fire. Uncheck to resume.

The plugin automatically pauses while the robot's text-to-speech (TTS) is speaking, then restores your previous pause state when TTS finishes. This prevents the robot's own voice from triggering detection.
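One way to picture the "restore your previous pause state" behavior is to keep the checkbox state and the TTS state as two separate flags. The sketch below is a hypothetical model, not the plugin's actual code; `createPauseState` and its method names are invented for illustration.

```javascript
// Hypothetical model of the pause logic: detection is suspended when either
// the user has checked Pause or the robot's TTS is currently speaking.
function createPauseState() {
  let userPaused = false; // mirrors the Pause checkbox
  let ttsActive = false;  // true while text-to-speech is speaking

  return {
    setUserPaused(value) { userPaused = value; },
    onTtsStart() { ttsActive = true; },
    onTtsEnd() { ttsActive = false; },
    // Detection runs only when this returns false.
    isPaused() { return userPaused || ttsActive; },
  };
}

const pauseState = createPauseState();
pauseState.setUserPaused(true); // user checks Pause
pauseState.onTtsStart();        // robot starts speaking
pauseState.onTtsEnd();          // robot stops speaking
console.log(pauseState.isPaused()); // the user's Pause choice is still in effect
```

Because the user's choice is never overwritten by the TTS flag, nothing needs to be saved or restored explicitly when speech finishes.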

Log Tab

Displays diagnostic output. Every ~50 audio frames (~2.5 seconds) a line is written:

[VAD] energy=0.000042 threshold=0.000050 ratio=0.840

Use these values to calibrate Sensitivity: while you speak, the energy should sit slightly above the threshold, and during silence it should stay below it.
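If you copy lines out of the log for analysis, they can be split into numbers with a small parser. This is a sketch that assumes every line follows the sample format shown above; `parseVadLogLine` is a hypothetical helper, not part of the skill. In the sample line, the ratio equals energy divided by threshold (0.000042 / 0.000050 = 0.84).

```javascript
// Parse one diagnostic line such as:
//   [VAD] energy=0.000042 threshold=0.000050 ratio=0.840
// Returns null when the line does not match that format.
function parseVadLogLine(line) {
  const pattern = /^\[VAD\]\s+energy=([-+\d.eE]+)\s+threshold=([-+\d.eE]+)\s+ratio=([-+\d.eE]+)/;
  const match = pattern.exec(line);
  if (!match) return null;
  return {
    energy: Number(match[1]),
    threshold: Number(match[2]),
    ratio: Number(match[3]),
  };
}

const sampleLine = "[VAD] energy=0.000042 threshold=0.000050 ratio=0.840";
const parsedSample = parseVadLogLine(sampleLine);
// A ratio below 1 means the energy is still under the threshold.
console.log(parsedSample.ratio < 1 ? "below threshold" : "at or above threshold");
```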

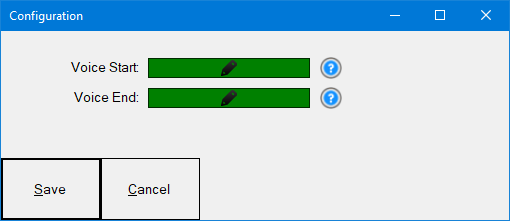

Configuration

Open configuration by clicking the gear/config button on the skill panel.

Sensitivity

Default: 0.00005

The FFT energy threshold for speech detection. The VAD compares the smoothed energy of the 300-3400 Hz band against this value.

- Lower value: more sensitive (triggers on quieter sounds)

- Higher value: less sensitive (requires louder speech to trigger)

Use the diagnostic log to tune this. If energy is consistently below threshold when speaking, lower the value. If background noise is triggering false detections, raise it.

Silence Duration

Default: 500 ms

How long (in milliseconds) silence must persist after speech before the Speech End event fires. Increase this if the script fires too early during natural pauses in speech. Decrease it for faster response after someone stops talking.

Minimum Speech Duration

Default: 150 ms

How long (in milliseconds) energy must continuously exceed the threshold before Speech Begin fires. This filters out short impulsive sounds (clicks, knocks, coughs) that are not sustained speech. Increase this to be more aggressive about ignoring non-speech sounds.

Scripts

Both scripts are configured inside the Config dialog.

Speech Start Script

Runs once when speech begins (after energy exceeds Sensitivity for at least Minimum Speech Duration milliseconds).

Typical use:

// Start a speech recognition skill

ControlCommand("Speech Recognition", "Start Listening");

Speech End Script

Runs once when silence has persisted for the Silence Duration after speech was active.

Typical use:

// Stop the speech recognition skill

ControlCommand("Speech Recognition", "Stop Listening");

How Detection Works

- Microphone audio is captured at 16 kHz, 16-bit mono, in 50 ms chunks.

- Each chunk is optionally filtered with a high-pass BiQuad filter (cutoff 300 Hz) to remove low-frequency rumble.

- Samples accumulate into a 1024-sample FFT buffer. When full, a Hamming window is applied and the FFT is computed.

- The average magnitude of all FFT bins in the 300-3400 Hz speech band is calculated.

- That energy value is exponentially smoothed (factor 0.3) to reduce frame-to-frame jitter.

- The smoothed energy is compared to Sensitivity:

- If above the threshold for Minimum Speech Duration, SpeechBegin fires.

- If below the threshold for Silence Duration after speech was active, SpeechEnd fires.
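The smoothing and timing steps above can be sketched as a small state machine. This is a simplified illustration, not the plugin's source: `createVad` and its option names are hypothetical, and it assumes the 0.3 factor weights the newest energy value (the text does not say which side it applies to).

```javascript
// Hypothetical sketch: exponential smoothing plus the two duration checks.
function createVad(options) {
  const sensitivity = options.sensitivity; // energy threshold, e.g. 0.00005
  const minSpeechMs = options.minSpeechMs; // e.g. 150
  const silenceMs = options.silenceMs;     // e.g. 500
  const alpha = 0.3; // assumed to weight the newest value

  let smoothed = 0;
  let speaking = false;
  let aboveMs = 0; // time continuously above threshold while idle
  let belowMs = 0; // time continuously below threshold while speaking

  // Feed one raw band-energy value that covers frameMs of audio.
  // Returns "SpeechBegin", "SpeechEnd", or null.
  return function update(rawEnergy, frameMs) {
    smoothed = alpha * rawEnergy + (1 - alpha) * smoothed;
    const above = smoothed > sensitivity;

    if (!speaking) {
      aboveMs = above ? aboveMs + frameMs : 0;
      if (aboveMs >= minSpeechMs) {
        speaking = true;
        belowMs = 0;
        return "SpeechBegin";
      }
    } else {
      belowMs = above ? 0 : belowMs + frameMs;
      if (belowMs >= silenceMs) {
        speaking = false;
        aboveMs = 0;
        return "SpeechEnd";
      }
    }
    return null;
  };
}

// Usage: three loud frames followed by silence, 50 ms per frame.
const demoVad = createVad({ sensitivity: 0.00005, minSpeechMs: 150, silenceMs: 500 });
const demoEvents = [];
for (let i = 0; i < 43; i++) {
  const event = demoVad(i < 3 ? 0.001 : 0, 50);
  if (event) demoEvents.push(event);
}
console.log(demoEvents);
```

Note that the smoothed value decays gradually after the input drops, so in this model the Speech End event arrives somewhat later than Silence Duration alone would suggest.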

Tuning Guide

Robot is not triggering on speech

- Check the diagnostic log. Note the energy value while speaking.

- Lower Sensitivity until it is slightly below the energy value when speaking.

- If still not triggering, ensure the correct microphone is selected in Windows Sound settings.

Too many false triggers (background noise)

- Note the energy value during silence in the diagnostic log.

- Raise Sensitivity to just above that noise floor value.

- Increase Minimum Speech Duration (e.g., 200-300 ms) to require more sustained sound.
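The two tuning cases above both come down to placing Sensitivity between the noise floor and the energy of speech. As a rough aid, the sketch below picks the midpoint between the loudest silence reading and the quietest speech reading. `suggestSensitivity` is a hypothetical helper, the midpoint rule is only one reasonable choice, and the input values are made-up examples rather than real measurements.

```javascript
// Suggest a Sensitivity value from energy readings noted in the diagnostic log.
// Returns null when the silence and speech readings overlap, in which case
// no single threshold separates them cleanly.
function suggestSensitivity(silenceEnergies, speechEnergies) {
  const noiseFloor = Math.max(...silenceEnergies);
  const quietestSpeech = Math.min(...speechEnergies);
  if (noiseFloor >= quietestSpeech) return null;
  return (noiseFloor + quietestSpeech) / 2;
}

// Example readings (illustrative values only).
const silenceReadings = [0.00001, 0.00002];
const speechReadings = [0.0002, 0.0008];
console.log(suggestSensitivity(silenceReadings, speechReadings));
```

If the function returns null, the readings suggest that raising Minimum Speech Duration or reducing background noise may help more than changing Sensitivity.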

Speech End fires too quickly (mid-sentence pauses)

Increase Silence Duration (e.g., 800-1500 ms).

Speech End fires too slowly after speaking stops

Decrease Silence Duration (e.g., 300 ms).

Robot triggers on its own voice

This is handled automatically - detection pauses when TTS is active. If issues persist, verify that the TTS events are wired correctly (the plugin uses EZBManager.PrimaryEZB.SpeechSynth).

Technical Specifications

| Property | Value |

|---|---|

| Sample rate | 16,000 Hz |

| Bit depth | 16-bit mono |

| Buffer interval | 50 ms |

| FFT size | 1024 samples |

| Speech band | 300-3400 Hz |

| Window function | Hamming |

| Pre-filter | High-pass at 300 Hz, Q=0.707 |

| Energy smoothing | Exponential, α=0.3 |
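The figures in this table determine which FFT bins fall inside the speech band. The sketch below works through that arithmetic and shows the standard Hamming window formula. The function names are hypothetical, and the rounding at the band edges is an assumption; the plugin may choose its bins differently.

```javascript
const sampleRate = 16000; // Hz, from the table
const fftSize = 1024;     // samples, from the table

// Each FFT bin covers sampleRate / fftSize Hz (15.625 Hz here),
// and one full FFT buffer spans fftSize / sampleRate seconds (64 ms here).
const binWidthHz = sampleRate / fftSize;
const frameMs = (fftSize / sampleRate) * 1000;

// Indices of the bins whose centre frequency lies inside [lowHz, highHz].
function bandBins(lowHz, highHz) {
  return {
    first: Math.ceil(lowHz / binWidthHz),
    last: Math.floor(highHz / binWidthHz),
  };
}

// Average magnitude across the band, given an array of per-bin magnitudes.
function bandEnergy(magnitudes, lowHz, highHz) {
  const bins = bandBins(lowHz, highHz);
  let sum = 0;
  for (let i = bins.first; i <= bins.last; i++) sum += magnitudes[i];
  return sum / (bins.last - bins.first + 1);
}

// Standard Hamming window coefficient for sample n of a size-point window.
function hamming(n, size) {
  return 0.54 - 0.46 * Math.cos((2 * Math.PI * n) / (size - 1));
}

console.log(binWidthHz, frameMs, bandBins(300, 3400));
```

With these settings, the 300-3400 Hz band maps to bins 20 through 217, so the average is taken over 198 bins.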

Control Commands for the Voice Activity Detection robot skill

Control Commands are available for this robot skill, which allow the skill to be controlled programmatically from scripts or other robot skills. These commands enable you to automate actions, respond to sensor inputs, and integrate the robot skill with other systems or custom interfaces. If you're new to Control Commands, the Control Command Manual explains how to use them and provides examples to help you get started.

Control Command Manual

Control Commands

Other skills or scripts can control VAD behavior using ControlCommand().

| Command | Effect |

|---|---|

| Pause | Suspends detection (same as checking the Pause checkbox) |

| Resume | Re-enables detection (same as unchecking Pause) |

Example from another skill's script:

// Pause VAD while playing audio

ControlCommand("Voice Activity Detection", "Pause");

// ... do something ...

// Re-enable VAD

ControlCommand("Voice Activity Detection", "Resume");
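If the work between Pause and Resume can fail, it may be worth wrapping the pair so that Resume is always sent. The sketch below is one way to do that. `withVadPaused` and `sendCommand` are hypothetical names, and the fallback stub exists only so the snippet can run outside ARC, where `ControlCommand()` is not defined.

```javascript
// Inside ARC the real ControlCommand() is used. Elsewhere, a stub records
// the calls so the helper can be tried without a robot project.
var sentCommands = [];
var sendCommand = (typeof ControlCommand === "function")
  ? ControlCommand
  : function (skill, command) { sentCommands.push(skill + ":" + command); };

// Hypothetical helper: pause VAD, run the action, and always resume,
// even if the action throws an error.
function withVadPaused(action) {
  sendCommand("Voice Activity Detection", "Pause");
  try {
    return action();
  } finally {
    sendCommand("Voice Activity Detection", "Resume");
  }
}

// Usage: replace the function body with the audio playback or other work.
withVadPaused(function () {
  // ... do something ...
});
```

Note that this helper always sends Resume afterwards, so it will also re-enable detection if Pause was already checked before the helper ran.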

This one seems interesting. I wonder if my TV or radio would set it off all the time

I can see great uses for this, particularly in interactive robots. When it hears a voice it could turn on face detection and start looking around until it sees a face. (would be super cool if it could support multiple microphones and compare the levels so it knows what direction to start looking. might not even need face detection for that, but I don't think Windows even deals well with having multiple microphones on at the same time, so probably beyond the scope of this project...).

Alan

You could combine this skill with the Kinect 369 depth skill. It returns a variable with the angle of audio.

I should add that the reason our client asked for this skill is to have fluent conversational dialog with their robots. Not sure what speech recognition they’re using. But I do know they’re using Google Dialogflow for NLP.

Hmm. Too bad your results with the Kinect for navigation haven't been as promising as Realsense. I don't think I need the ability badly enough for the expense of both (or a Kinect and a Lidar). I'll keep it in mind though. I think I recall seeing something on one of the robot parts sites about a sound direction finder. If I come across it again I'll see whether it is something that is inexpensive and a skill could be written for.

Fixed a bug with an error when closing the skill

I would love to see this have an added feature sometime that allows it to check output audio instead of input audio. For talking robots, the ServoTalk skills will estimate how long a jaw should move based on a text string's length and content. They tend not to be very accurate and often underestimate or overestimate the time it takes for the text-to-speech app to run. I thought this skill could help circumvent this limitation by keeping an ear open for when the audio starts and ends. I also thought of adding some natural neck animation while my robot speaks. The problem is that I have no way to trigger my 'stay alive' script when the audio begins and stop it when text-to-speech ends. This skill almost got me there, then I realized it worked only with the mic input. A checkbox that switches what it listens to from MIC to LINE OUT would be super cool!

v7 has been updated with some additional fine-tuning for more accurate detection and completion of speech.