T265 VSLAM for ARC: SLAM-based mapping and precise way-point navigation, low-power tracking, and NMS telemetry.

How to add the Intel Realsense T265 robot skill

- Load the most recent release of ARC (Get ARC).

- Press the Project tab from the top menu bar in ARC.

- Press Add Robot Skill from the button ribbon bar in ARC.

- Choose the Navigation category tab.

- Press the Intel Realsense T265 icon to add the robot skill to your project.

Don't have a robot yet?

Follow the Getting Started Guide to build a robot and use the Intel Realsense T265 robot skill.

How to use the Intel Realsense T265 robot skill

With its small form factor and low power consumption, the Intel RealSense Tracking Camera T265 is designed to give your robot reliable tracking performance. This user-friendly ARC robot skill provides an easy way to use the T265 for way-point navigation.

Combined with this robot skill, the T265 gives your robot a SLAM (Simultaneous Localization and Mapping) solution. It allows your robot to construct a map of an unknown environment while simultaneously keeping track of its own location within that environment. Before the days of GPS, sailors navigated by the stars, using their movements and positions to find their way across oceans. VSLAM uses a combination of cameras and Inertial Measurement Units (IMUs) to navigate in a similar way, using visual features in the environment to track its way around unknown spaces accurately. This ARC robot skill takes care of all these complicated features for you.

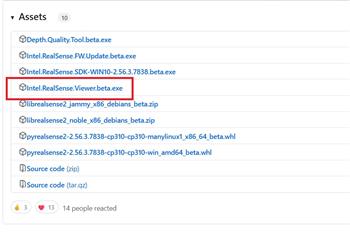

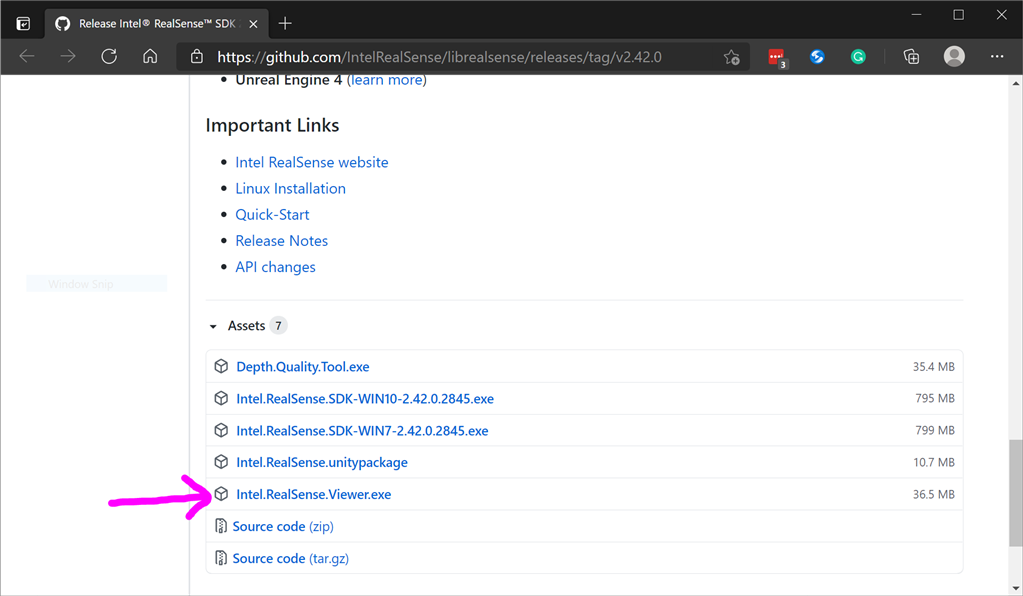

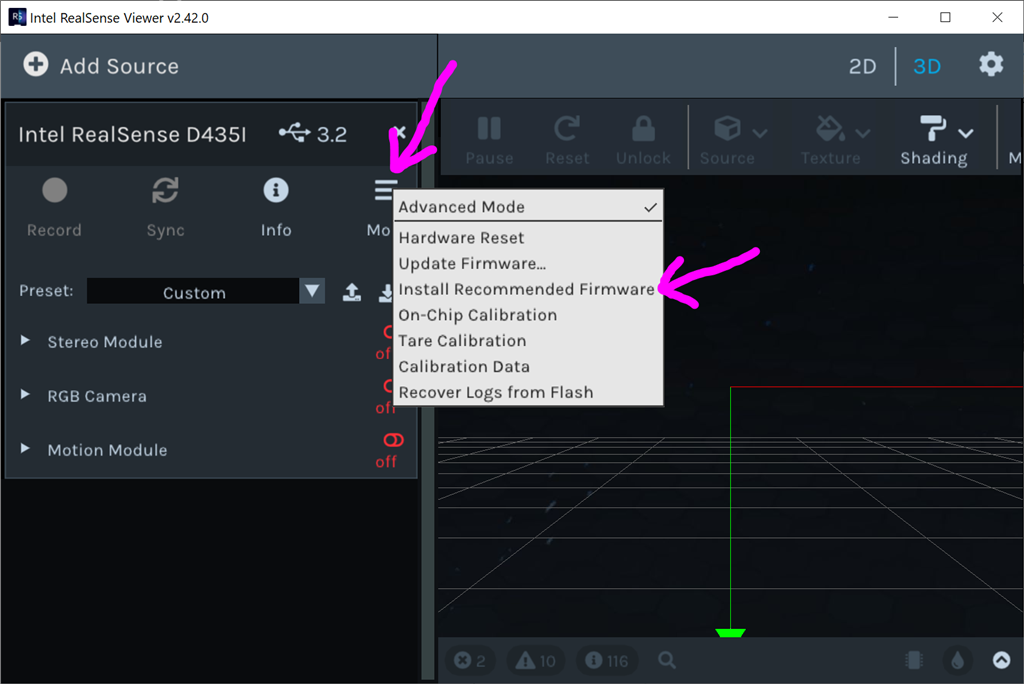

Update Firmware

The device sensor may require a firmware update.

Visit the Realsense GitHub page, scroll to the bottom of the page, and install the Intel.Realsense.Viewer.exe from here: https://github.com/IntelRealSense/librealsense/releases/latest

Click the hamburger settings icon and select Install Recommended Firmware.

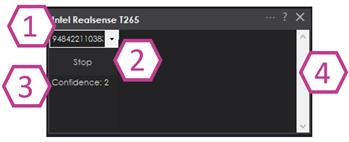

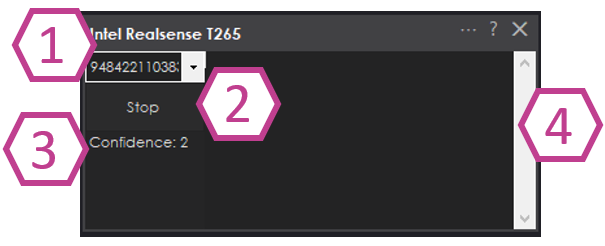

Robot Skill Window

The skill has a very minimal interface because it pushes data into the NMS (Navigation Messaging System) and is generally used by other robot skills (such as The Navigator).

Drop-down to select Realsense device by the serial number. This is useful if there are multiple devices on one PC.

START/STOP the Intel T265 connection.

The tracking confidence, from 0 (low) to 3 (highest). In a brightly lit room with many points of interest (not just white walls), the tracking confidence will be high. It will be low if the room does not have enough light and/or detail for the sensor to track.

Log text display for errors and statuses.

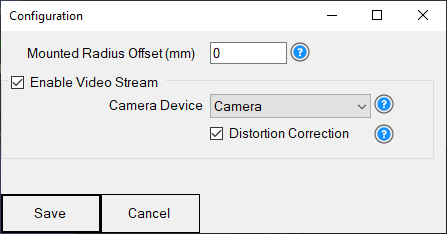
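One way to act on the tracking confidence is to ignore pose updates while it is too low. The following is a minimal Python sketch of that idea. The names `TrackingConfidence` and `should_trust_pose` are hypothetical, the intermediate labels are an assumption (the manual only names 0 as low and 3 as highest), and the confidence value is passed in by the caller, so no ARC scripting API is assumed.

```python
from enum import IntEnum


class TrackingConfidence(IntEnum):
    """Hypothetical labels for the 0-3 value shown in the skill window."""
    LOWEST = 0
    LOW = 1
    MEDIUM = 2
    HIGHEST = 3


def should_trust_pose(confidence: int,
                      minimum: TrackingConfidence = TrackingConfidence.MEDIUM) -> bool:
    """Return True when the reported confidence meets the chosen minimum.

    The default threshold (MEDIUM) is an illustrative choice, not a
    recommendation from the skill's documentation.
    """
    if not 0 <= confidence <= 3:
        raise ValueError(f"confidence must be between 0 and 3, got {confidence}")
    return confidence >= minimum


if __name__ == "__main__":
    for value in range(4):
        label = TrackingConfidence(value).name
        print(f"confidence {value} ({label}): trust pose = {should_trust_pose(value)}")
```

A robot in a dim room could, for example, pause way-point navigation whenever `should_trust_pose` returns False and resume once the lighting improves.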

Config Menu

1) Mounted Radius Offset (mm) is the distance in mm of the T265 from the center of the robot. A negative number is toward the front of the robot, and a positive number is toward the rear. The sensor must be facing 0 degrees toward the front of the robot. The sensor must not be offset to the left or right of the robot.

Enable Video Stream will send the fisheye b&w video from the T265 to the selected camera device. The selected camera device robot skill must have Custom specified as the input device. Also, the camera device will need to be started to view the video.

Distortion Correction will use a real-time algorithm to correct the fisheye lens, which isn't always needed and is very CPU intensive.
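To see why the Mounted Radius Offset matters, consider a robot that spins in place with the camera mounted away from its center: the camera traces a circle even though the robot has not moved. The Python sketch below shows the geometry of removing that circle. It is not the skill's actual implementation. The function name, the axis convention (heading measured counter-clockwise from the +X axis) and the sample values are assumptions; only the sign convention (negative toward the front, positive toward the rear) comes from the description above.

```python
import math


def robot_center_from_camera(cam_x_mm: float, cam_y_mm: float,
                             heading_deg: float,
                             radius_offset_mm: float) -> tuple[float, float]:
    """Estimate the robot's center from the camera position.

    A negative offset means the camera sits toward the front of the robot,
    so the center lies behind the camera along the heading direction.
    """
    heading = math.radians(heading_deg)
    # Unit vector pointing toward the front of the robot (assumed convention).
    forward_x, forward_y = math.cos(heading), math.sin(heading)
    # camera = center - offset * forward, therefore center = camera + offset * forward
    return (cam_x_mm + radius_offset_mm * forward_x,
            cam_y_mm + radius_offset_mm * forward_y)


if __name__ == "__main__":
    offset_mm = -100.0  # camera mounted 100 mm in front of the center
    for heading_deg in (0, 90, 180, 270):
        h = math.radians(heading_deg)
        # Where the camera ends up while the robot spins in place at (0, 0).
        cam = (100.0 * math.cos(h), 100.0 * math.sin(h))
        center = robot_center_from_camera(cam[0], cam[1], heading_deg, offset_mm)
        print(heading_deg, [round(c, 3) for c in cam], [round(c, 3) for c in center])
```

With the offset applied, every heading maps back to the same center point, which is what the navigation map should show for a robot turning on the spot.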

Video Demonstration

Here's a video of the Intel RealSense T265 feeding The Navigator skill for way-point navigation.

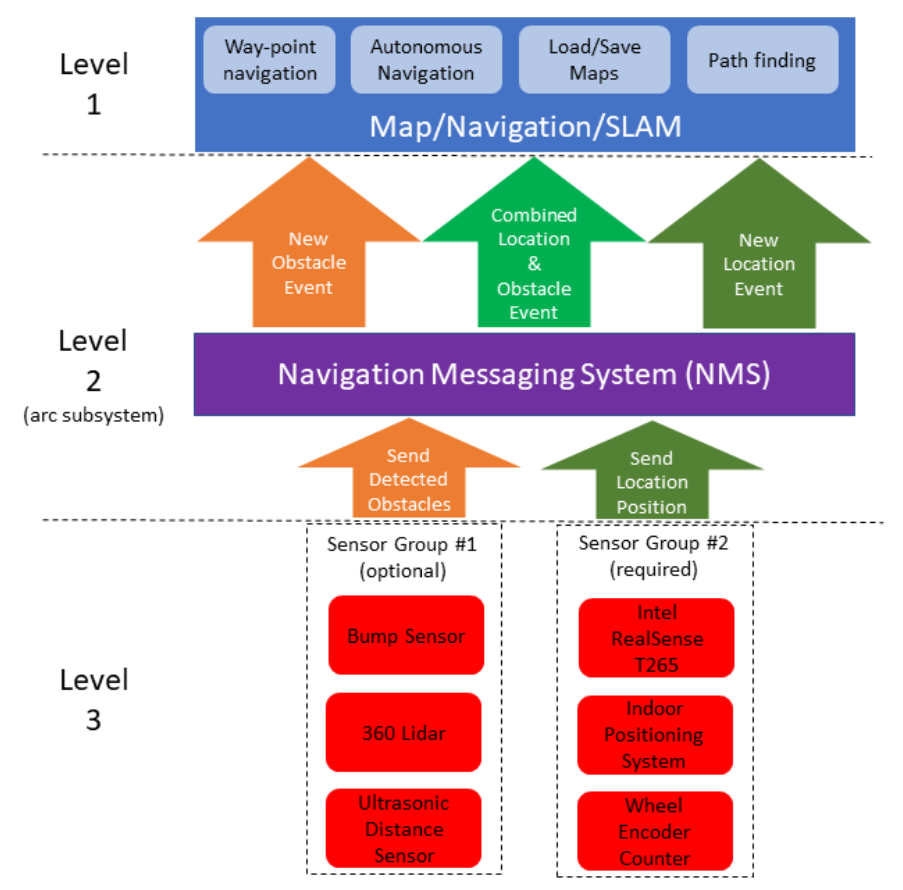

ARC Navigation Messaging System

This skill is part of the ARC Navigation Messaging System (NMS). We encourage you to read more about the messaging system to understand the available skills. This skill is in Level #3 Group #2 in the diagram below. It contributes telemetry positioning to the Cartesian positioning channel of the NMS. Combine this skill with Level #3 Group #1 skills for obstacle avoidance. For Level #1, The Navigator works well.

Environments

The T265 will work both indoors and outdoors. However, bright direct light (sunlight) and darkness will affect performance. Much like our eyes, the camera is susceptible to glare and to a lack of detail in the dark. Because the camera's visual data is combined with the IMU, the camera must have reliable visible light. When the camera cannot detect the environment, the algorithm is biased toward the IMU and will experience drift, which greatly reduces the sensor's accuracy.
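The drift that appears when tracking leans on the IMU alone can be illustrated with a simplified model: a small constant accelerometer bias, integrated twice, produces a position error that grows with the square of time. The Python sketch below is only that simplified model. The bias value is illustrative and is not a T265 specification, and the function name `position_drift` is hypothetical. The sensor's real fusion algorithm is not described here.

```python
def position_drift(bias_m_s2: float, seconds: float, dt: float = 0.01) -> float:
    """Integrate a constant acceleration bias twice and return the position error in meters."""
    velocity = 0.0
    position = 0.0
    steps = round(seconds / dt)
    for _ in range(steps):
        velocity += bias_m_s2 * dt   # first integration: bias becomes velocity error
        position += velocity * dt    # second integration: velocity error becomes position error
    return position


if __name__ == "__main__":
    bias = 0.01  # m/s^2, illustrative value only
    for t in (5, 10, 20):
        closed_form = 0.5 * bias * t ** 2
        print(f"{t:>2} s: simulated {position_drift(bias, t):.3f} m, "
              f"closed form {closed_form:.3f} m")
```

Doubling the time without visual correction roughly quadruples the error, which is why a well-lit room with plenty of visual detail matters so much.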

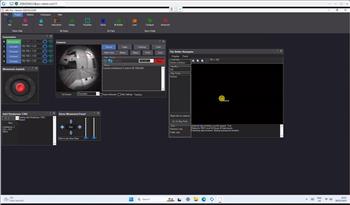

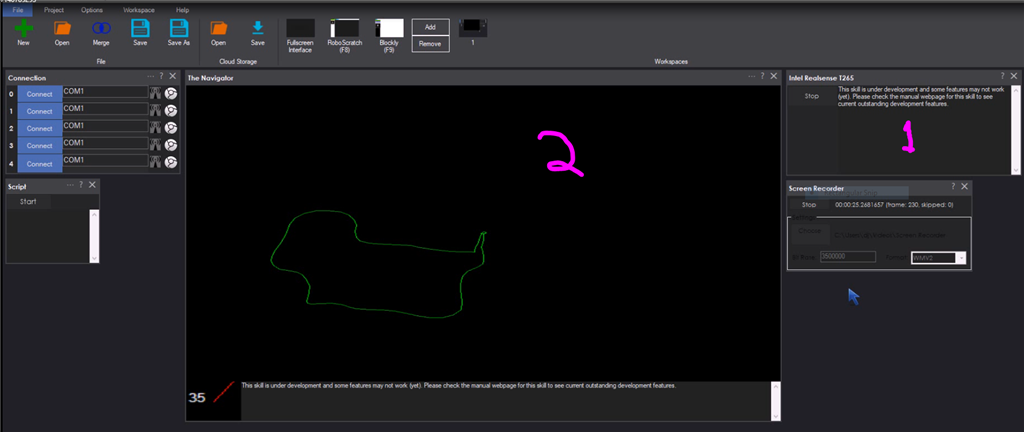

Screenshot

Here is a screenshot of this skill combined with The Navigator in ARC while navigating through a room between two way-points.

Starting Location

The T265 does not include a GPS/Compass or any ability to recognize where it is when initialized. This means your robot will have to initialize from a known location and direction to reuse saved maps. Make sure you mark the spot on the ground with masking tape where the robot starts from.
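The effect of a sloppy starting position can be sketched with a little geometry. Assuming the sensor reports poses relative to wherever it was when tracking started, a saved map position is the start pose composed with the reported pose, so any error in the start pose shifts everything after it. The Python below is an illustration of that reasoning, not an ARC feature. `Pose2D` and `to_map_frame` are hypothetical names, and the axis convention (heading counter-clockwise from +X) is an assumption.

```python
import math
from typing import NamedTuple


class Pose2D(NamedTuple):
    x_mm: float
    y_mm: float
    heading_deg: float


def to_map_frame(start: Pose2D, relative: Pose2D) -> Pose2D:
    """Compose the robot's start pose with a pose reported relative to that start."""
    t = math.radians(start.heading_deg)
    x = start.x_mm + relative.x_mm * math.cos(t) - relative.y_mm * math.sin(t)
    y = start.y_mm + relative.x_mm * math.sin(t) + relative.y_mm * math.cos(t)
    heading = (start.heading_deg + relative.heading_deg) % 360.0
    return Pose2D(x, y, heading)


if __name__ == "__main__":
    driven = Pose2D(1000.0, 0.0, 0.0)  # sensor reports 1 m straight ahead
    exact = to_map_frame(Pose2D(0.0, 0.0, 0.0), driven)
    rotated = to_map_frame(Pose2D(0.0, 0.0, 10.0), driven)  # started 10 degrees off
    sideways_error = math.hypot(rotated.x_mm - exact.x_mm, rotated.y_mm - exact.y_mm)
    print(f"position error after 1 m: {sideways_error:.1f} mm")
```

A robot placed only 10 degrees off its taped heading ends up roughly 174 mm away from where the saved map expects it after driving one meter, and the gap keeps growing with distance.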

How To Use This

Connect your Intel RealSense T265 camera to the computer's USB port

Load ARC (version must be >= 2020.12.05.00)

Add this skill to your project

Now we'll need a Navigation skill. Add The Navigator to your project

Press START on the Intel RealSense skill and data will begin mapping your robot's position

How Does It Work?

Well, magic! Actually, the camera is quite interesting: it breaks the world down into a point cloud of features. It remembers the visual features so it can re-align itself on the internal map. It uses what Intel calls a VPU (Vision Processing Unit). Here's a video of what the camera sees.

Related Hack Events

A Little Of This, A Little Of That

DJ's K8 Intel Realsense & Navigation

Related Questions

T265 Realsence Viewer.Exe On An Upboard

Navigator With Mecanum (Omni) Wheel Robot Platforms

Questions Regarding Navigation

In The Better Navigator No Movement From The T265


Hardware Info


Hi DJ, I moved the T265 camera position to the edge of the Roomba, and when I spin 360 degrees in place I get a circle like in the pic. Could you make an offset for the camera as you did with the US sensors?

It would be interesting to see how accurate the orientation data is in order to do this. Playing with the sensor, it does have orientation: yaw, pitch and roll. X, Y and Z axis tracking would also be nice for drone tracking and robot arms. I guess you could always mount the sensor in the centre of the Roomba on your pole and reduce the offset.

I would love if we had D435 support. Watch this video.

Long-term goal would be: map the room with T265 and D435. Use object recognition to identify and find the object. Use T265 data to go to the object and use data from the D435 to calculate the exact location and orientation of the object. Now use inverse kinematics to calculate how to pick it up and run a bunch of simulations. Finally, use the robot arm and gripper to pick up the object. Mounting the T265 on the robot arm would verify our calculations as we pick up the object, and could also be used to train the robot using ML to improve IK calculations.

So GPU TPU support (Nvidia Jetson?) for accurate object recognition and IK calculations. D435i support for 3D point cloud and T265 for location and orientation and movement of robot arm in 3D space.

@Nink I had the cam on the pole but it creates some vibration and having an offset parameter lets you place the camera where you want. How are you using your T265? The Jetson is ARM based, so not ARC compatible.

Well, it could run on Linux and ARM, it used to :-). But I get that DJ doesn't want to support 2 distros, as there is a lot of effort involved and that = costs. But that doesn't prevent someone adding Jetson support as an accessory and offloading all the GPU requirements to the Nano.

Right now I'm just playing with the T265 (not enough hours in the day), but my goal is a robot that can do some simple tasks around the house: pick up shoes and put them away, vacuum without smashing the wife's furniture up or getting stuck under the coffee table and, most important, GET ME A BEER.

What are your plans @proteusy?

I am currently working on the "Go to work for me" script.:p

Updated for radius offset in MM from the center of the robot. Read the manual above for more detail or use the question mark in the config menu.

What is the performance and power consumption like on the stick computer @proteusy, are you able to Remote Desktop in ok? Since we are only getting telemetry data of T265 I am wondering if it would be better to just pull the data off the T265 with a pi or stick pc and send it to Navigator on a remote desktop. I mounted a NUC with 2 * 3 cell Lipo's in series for ~22v to run everything off, works fine but battery life is short.

For now I use the Roomba's battery only for the Roomba and have 4 x 3.7V 3500mAh MR18650 cells for the rest. The Intel stick is surprisingly fast and the nominal consumption is around 1.2 amp (just the stick). All together I get around 2 hours of play. My next step is to buy a 5500mAh li-ion for the Roomba and run everything from there. I am currently working on obstacle avoidance when using The Navigator's way-points.