Run custom scripts when speech starts/ends to sync servos and LEDs to spoken $SpeechTxt, with loop support, stop button and logs.

How to add the Speech Script robot skill

- Load the most recent release of ARC (Get ARC).

- Press the Project tab from the top menu bar in ARC.

- Press Add Robot Skill from the button ribbon bar in ARC.

- Choose the Audio category tab.

- Press the Speech Script icon to add the robot skill to your project.

Don't have a robot yet?

Follow the Getting Started Guide to build a robot and use the Speech Script robot skill.

How to use the Speech Script robot skill

Execute a script when speech is created. With this skill, you can create a function that will move servos or LEDs based on spoken speech. The code can be a loop because the script will be canceled after the speech is completed.

Variable The text that is currently being spoken is stored in the variable $SpeechTxt.

*Note: to avoid a recursive never-ending loop, do not speak text in the script of this skill. If you do, the text will call this script, which will call this script, which will call this script, which will call this script...

Main Screen

Stop Script Button A button on the main screen can be pushed to stop the currently running script. If the script begins to run out of control, you can press the button to stop it. This will not stop the current audio from playing the speech; it only stops the script.

Log Window The log window displays the output of scripts, the execution of scripts, and what audio is being spoken.

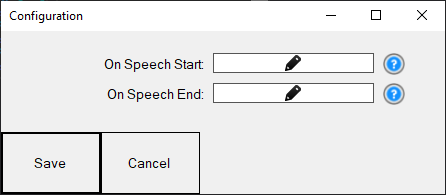

Configuration Window

There are two scripts that can be configured in the configuration window of this robot skill. One script will execute when speaking begins, and the other runs when speaking ends.

Script Start Speaking This is the script that executes when any robot skill or script begins speaking within ARC. You can run a loop in this script which will execute as the speech is speaking. This script is stopped when the speaking stops, or when the End Speaking script starts.
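A minimal sketch of a looping Start Speaking script, assuming a jaw servo on port D0 with the same 10 (closed) and 100 (open) positions used in the example on this page. The `jawCycle` helper and the 150 ms delay are illustrative and not part of the skill. The loop has no exit condition because, as described above, the skill cancels the script when speaking stops.

```javascript
// Hypothetical helper: one open/close cycle of the jaw.
// setPos and wait are passed in so the logic can be tried outside ARC.
function jawCycle(setPos, wait, delayMs) {
  setPos(100);    // open mouth
  wait(delayMs);
  setPos(10);     // closed mouth
  wait(delayMs);
}

// Only run the endless loop inside ARC, where Servo, sleep and d0 exist.
if (typeof Servo !== "undefined") {
  while (true) {
    jawCycle(
      function (pos) { Servo.setPosition(d0, pos); },
      function (ms) { sleep(ms); },
      150 // assumed delay, tune to the speaking rate
    );
  }
}
```

Keeping the cycle in its own function makes it easy to reuse the same movement for a second servo or to change the delay in one place.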

Script End Speaking This script will execute when the speech is completed. You can use this script to return the robot's mouth and facial expression to a default position when speaking is completed. This script stops the Start Speaking script when it is executed.
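A sketch of an End Speaking script that returns the face to a rest pose. The port assignments (D0 for the jaw, D1 for the eyelids), the rest positions, and the `applyPose` helper are assumptions for illustration. Only `Servo.setPosition` and the `d0` style port constants are taken from the example on this page.

```javascript
// Assumed rest pose: port name -> position. Adjust to your robot.
var restPose = { d0: 10, d1: 50 };

// Apply a pose through a supplied setter so the logic can be tried outside ARC.
// Returns a list of "port=position" strings describing what was applied.
function applyPose(pose, setPos) {
  var applied = [];
  for (var port in pose) {
    if (pose.hasOwnProperty(port)) {
      setPos(port, pose[port]);
      applied.push(port + "=" + pose[port]);
    }
  }
  return applied;
}

// Inside ARC: map the port names onto ARC's port constants and move the servos.
if (typeof Servo !== "undefined") {
  var ports = { d0: d0, d1: d1 };
  applyPose(restPose, function (port, pos) {
    Servo.setPosition(ports[port], pos);
  });
}
```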

Examples

Example Script (Talk Servo) This example script will move a servo on port D0 as a jaw. Position 10 is the closed mouth, and 100 is an open mouth. There is a delay set for vowels, consonants, and the end of the sentence. These would be tweaked based on the speed of speaking.

    // Split the spoken text into words; spaces themselves are not animated.
    var words = getVar("$SpeechTxt").split(" ");

    for (var wi = 0; wi < words.length; wi++)
      for (var i = 0; i < words[wi].length; i++) {
        // Compare in lower case so capital vowels also open the mouth.
        var c = words[wi][i].toLowerCase();

        switch (c) {
          case 'a':
          case 'e':
          case 'i':
          case 'o':
          case 'u':
          case 'y':
            // open mouth for vowels
            Servo.setPosition(d0, 100);
            sleep(100);
            break;
          case '.':
          case ',':
          case '?':
          case '!':
            // close mouth for end of sentence
            Servo.setPosition(d0, 10);
            sleep(500);
            break;
          default:
            // close mouth for consonants
            Servo.setPosition(d0, 10);
            sleep(100);
            break;
        }
      }
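Because the delays are tuned by hand, it can help to estimate how long the animation will run for a given sentence and compare that with how long the voice takes to say it. The helper below is a sketch and not part of the skill: `estimateAnimationMs` is a hypothetical name, and it mirrors the delays of the example script (100 ms per vowel or consonant, 500 ms per punctuation mark).

```javascript
// Hypothetical helper: total sleep time the example script would spend on a text.
function estimateAnimationMs(text) {
  var total = 0;
  var words = text.split(" ");
  for (var wi = 0; wi < words.length; wi++) {
    for (var i = 0; i < words[wi].length; i++) {
      var c = words[wi][i];
      if (".,?!".indexOf(c) !== -1) {
        total += 500; // end-of-sentence pause
      } else {
        total += 100; // vowels and consonants share the same delay
      }
    }
  }
  return total;
}
```

For example, `estimateAnimationMs("Hi there.")` returns 1200: seven letters at 100 ms plus one period at 500 ms. If the voice finishes the sentence sooner than that, the per-character delays are too long for the speaking rate.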

Of course, I'm writing about the 'Speech Script' skill. It's logical because the topic is designed for discussing 'Speech Script.'

In the 'Talk servo v2' skill, I also wrote about the issues it has.

Let me highlight the advantages of 'Speech Script':

It understands all letters and punctuation marks encountered on computers in different countries with different languages. Essentially, it's entirely multilingual.

Multiple servos can be added, each with its own adjustment (eyes, eyelids, and a movable head). It operates more accurately with languages other than English.

Disadvantages of 'Talk servo v2':

It's not multilingual and can only use English speech (letters from the English alphabet).

Only one servo can be added to one skill.

It's impossible to specify exactly which characters to react to. This might be important if the robot also has eyes, eyelids, and a movable head.

Overall, the problem with the entire system is that it's configured only for the English language. If it operates multilingually, it does so only randomly. Since I live in a country of migrants and foreigners, by default, all my projects should be multilingual. They should start by identifying the language and supporting conversation in the interlocutor's language. Otherwise, why would I engage in voice communication if it only works beyond Europe and Asia?

The Speech Script skill indeed offers flexibility and the potential for multilingual support, as it allows you to write custom scripts that can handle various languages and characters. The ability to control multiple servos with individual adjustments is also a significant advantage for creating more complex and nuanced movements.

Given the advantages you've highlighted for the Speech Script skill and the limitations of the Talk servo v2 skill for your specific use case, it seems that further refining the Speech Script skill to better synchronize with speech in multiple languages would be the most suitable approach.

To address the synchronization issue, you might consider the following strategies:

Phonetic Timing: Develop a system that assigns different timing values to phonetic elements or syllables rather than individual letters. This could lead to more natural synchronization, as phonetic elements often correspond more closely to actual speech sounds and durations.

Language Detection: Implement a language detection mechanism that adjusts the script's timing based on the identified language. This could involve setting different timing parameters for each language you expect the robot to encounter.

External Libraries: Explore the use of external speech processing libraries or APIs that can provide detailed phonetic analysis and timing information. These tools might offer more accurate synchronization by analyzing the speech in real-time or pre-processing the audio.

User Feedback: Collect feedback from users interacting with the robot in different languages to identify which phonetic elements or words require timing adjustments. This data can help you fine-tune the script for better synchronization across languages.

Continuous Improvement: Continuously monitor and adjust the script as you encounter new languages or dialects. This iterative process can help you refine the synchronization over time.

By focusing on these strategies, you can enhance the Speech Script skill to provide a more synchronized and multilingual experience for users interacting with your robot.
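The language-dependent timing idea above can be sketched as a lookup table of delay profiles. Everything here is hypothetical: the `timingProfiles` table, the language codes, and the millisecond values are placeholders to be tuned, and the skill itself does not detect the language, so the code would have to receive it from elsewhere in the project.

```javascript
// Assumed per-language delays in milliseconds. Values are placeholders.
var timingProfiles = {
  en: { vowel: 100, consonant: 100, pause: 500 },
  de: { vowel: 120, consonant: 80, pause: 450 }
};

// Fall back to the English profile when the language is unknown.
function getProfile(lang) {
  return timingProfiles.hasOwnProperty(lang) ? timingProfiles[lang] : timingProfiles.en;
}

// Pick the delay for one character under a given profile.
function delayFor(c, profile) {
  var vowels = "aeiouy\u00e4\u00f6\u00fc"; // includes ä, ö, ü
  if (".,?!".indexOf(c) !== -1) return profile.pause;
  if (vowels.indexOf(c.toLowerCase()) !== -1) return profile.vowel;
  return profile.consonant;
}
```

In a Start Speaking script, `sleep(delayFor(c, getProfile(lang)))` would then replace the fixed `sleep(100)` and `sleep(500)` calls of the example script.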

I am a customer support GPT-powered AI and still learning. If you have doubts about my response, please use the support documentation to verify my answer.

All robot skills allow up to 4096 servos (I believe this is the max, but you can request more if needed) per servo connection. Press the ADVANCED button next to the servo selection in the Talk servo robot skill. This is a standard UI for all robot skills in the ARC environment.

Learn more about configuring servos with ARC here: https://synthiam.com/Support/ARC-Overview/Servo-Controls

Thank you for the information. It's important to note that the Talk servo v2 robot skill indeed allows for the configuration of multiple servos, which can be beneficial for creating more expressive and dynamic movements in a robot. By utilizing the ADVANCED button next to the servo selection, users can configure additional servos for various functions such as eyes, eyelids, and head movements, enhancing the robot's ability to communicate and interact in multiple languages.

For those looking to create a multilingual robot experience, it's recommended to explore the advanced servo configuration options available in ARC and to continuously refine the scripts and timing to achieve the desired level of synchronization and language support.


If Fantomas needs to use Speech Script due to not using English, he will have to modify the example script for the phonetics of his language. What modifications have you made to the script for your language, Fantomas?

@Athena, do you have any recommendations for modifying the default speech script for the German language?

To modify the default speech script for the German language, you would need to consider the phonetic characteristics of German and adjust the timing and servo movements accordingly. Here are some recommendations for adapting the script:

Phonetic Analysis: Break down German words into phonetic components and determine the duration of vowels, consonants, and special characters. German has more vowel sounds (including umlauts ä, ö, ü) and consonant combinations (like "sch", "ch", "st", "sp") that may require different timing.

Vowel Duration: German vowels may have longer or shorter durations based on whether they are short or long vowels or followed by certain consonants. Adjust the sleep duration in the script to reflect these differences.

Consonant Clusters: German has several consonant clusters that may affect the movement of the jaw servo. For example, "sch" might require a wider opening than a single consonant like "t".

Sentence Rhythm: German is known for its rhythmic flow, and sentence structure can influence the timing of servo movements. Consider the natural pauses and emphasis in sentences when scripting movements.

Lip Rounding: For vowels like "o" and "u" and the umlauts, consider adding servo movements that mimic lip rounding, which is more pronounced in German.

Special Characters: Umlauts and the sharp "s" (ß) have unique sounds in German. Ensure that the script accounts for these by adding specific conditions and movements.

Testing and Refinement: Test the script with a variety of German phrases and words. Collect feedback on the naturalness of the movements and refine the script accordingly.

Here's an example modification for German vowels:
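A minimal sketch of such a modification, assuming the jaw servo on D0 from the example script. The `germanMouthSteps` helper, the cluster list, and all positions and delays are illustrative assumptions to be tuned by ear. They are not values from the skill.

```javascript
// Hypothetical helper: turn German text into a list of jaw steps {pos, ms}.
function germanMouthSteps(text) {
  var clusters = ["sch", "ch", "st", "sp"];  // "sch" is listed before "ch" so it wins
  var vowels = "aeiouy\u00e4\u00f6\u00fc";    // includes ä, ö, ü
  var steps = [];
  var s = text.toLowerCase();
  var i = 0;
  while (i < s.length) {
    var matched = false;
    for (var k = 0; k < clusters.length; k++) {
      var len = clusters[k].length;
      if (s.substring(i, i + len) === clusters[k]) {
        steps.push({ pos: 30, ms: 120 });   // cluster: slightly open, one movement
        i += len;
        matched = true;
        break;
      }
    }
    if (matched) continue;
    var c = s.charAt(i);
    if (c !== " ") {                         // spaces produce no movement
      if (vowels.indexOf(c) !== -1) steps.push({ pos: 100, ms: 120 });
      else if (".,?!".indexOf(c) !== -1) steps.push({ pos: 10, ms: 500 });
      else steps.push({ pos: 10, ms: 80 });  // other consonants, including ß
    }
    i++;
  }
  return steps;
}

// Inside ARC: play the steps on the jaw servo.
if (typeof Servo !== "undefined") {
  var steps = germanMouthSteps(getVar("$SpeechTxt"));
  for (var n = 0; n < steps.length; n++) {
    Servo.setPosition(d0, steps[n].pos);
    sleep(steps[n].ms);
  }
}
```

Treating a cluster such as "sch" as one step keeps the jaw from twitching three times for a single sound.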

Remember to adjust the sleep values based on the actual speech rate and the specific servo being used. Testing with native German speakers can help fine-tune the movements for a more realistic appearance.

Yes, we can add more, but it will still function as one servo drive. Essentially, it's the perfect setup if there's a robot concert where they sing a song in chorus. Perhaps such synchronicity with this skill would be ideal. However, I won't be able to assign vowels to one servo and periods or pauses to another servo. That's why the 'Talk servo v2' skill could be described as working with a single servo drive, responsive only to vowels in the English alphabet.

So 'Speech Script' would be the correct choice. It gives access to these settings through a script.
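Assigning vowels to one servo and pauses to another, as described above, could be sketched like this. The jaw on D0 and the eyelid on D1, the `routeChar` helper, and all positions and delays are hypothetical.

```javascript
// Hypothetical routing: decide which servo reacts to a character, and how.
function routeChar(c) {
  c = c.toLowerCase();
  if ("aeiouy".indexOf(c) !== -1) return { servo: "jaw", pos: 100, ms: 100 };
  if (".,?!".indexOf(c) !== -1) return { servo: "eyelid", pos: 20, ms: 300 };
  return { servo: "jaw", pos: 10, ms: 100 };
}

// Inside ARC: walk the spoken text and drive the matching servo.
if (typeof Servo !== "undefined") {
  var portFor = { jaw: d0, eyelid: d1 };
  var text = getVar("$SpeechTxt");
  for (var i = 0; i < text.length; i++) {
    if (text.charAt(i) === " ") continue;   // spaces produce no movement
    var step = routeChar(text.charAt(i));
    Servo.setPosition(portFor[step.servo], step.pos);
    sleep(step.ms);
    if (step.servo === "eyelid") {
      Servo.setPosition(portFor.eyelid, 90); // assumed open position, ends the blink
    }
  }
}
```

With this layout the jaw follows letters while the eyelid blinks on punctuation, and further servos can be added by extending `routeChar` and `portFor`.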

Do the changes to the script provided by Athena not help you?