I found this robot on Thingiverse (www.thingiverse.com/thing:2471044) and thought it would be a fun project. Check it out; they've really created a great robot. Printing it takes about 70 hours, and then you need to go shopping for baking supplies (Raspberry Pis and such). So I thought, why not make it run with EZ-Robot hardware (most people here have lots of that) and software? I modified the camera holder to accept an EZ-Robot camera and the arms to accept EZ-Robot HDD servo horns, and connected everything to an IoTiny. Building it was pretty straightforward. Programming it to solve the cube was another matter, so I got hold of forum member ptp and asked if he would be interested in helping out, and he was. He doesn't have a 3D printer, however, so I built him the robot and sent it to him. He has been busy working on an EZ-Robot plug-in to solve the cube as well as calibrate the arms and grippers. We are hoping to have the plug-in ready to share by the end of the month, so start printing. This would be a fun project for both kids and adults. We'll keep you posted.

Ooooh, I saw you post an EZ-Cloud app about this and wondered when we'd hear more. This is very exciting!

@Bob, I saw this on Thingiverse a while ago and thought about building it. It wasn’t until I read into it more that I realized you had to subscribe and pay for the s/w to make it fully functional.

It’s absolutely fantastic you have modified it for the EZB and that ptp is creating the plugin and the calibration for this, as it looks extremely complex.

I take my hat off to both of you guys, this is GREAT work!

Chris.

Cool project. I wonder whether the EZ-Robot Six, modified with new claws, would have the range of motion and clearance to solve a cube. Remove two legs and move the extra 4 servos to the other 4 legs (1 each), move the camera, and then add the new claws and a stand.

Very nice, Bob. That's a lot of work. I'll definitely put that on my build list. I see a discarded InMoov arm off to the right. Did your InMoov have to donate his hand servos?

Thanks everyone for the support!

A special thanks: @Bob, a great challenge and thank you for your donation (print time, print materials and shipping!).

Another thank you to the original creators (otvinta3d).

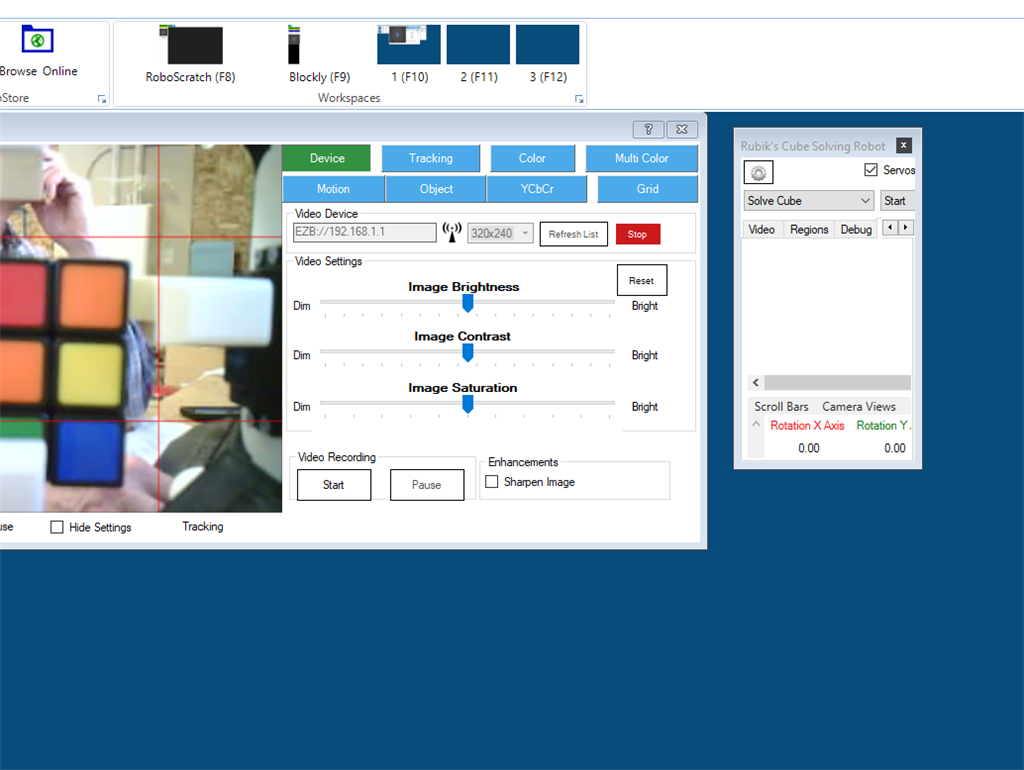

The build is well documented. Bob made some adaptations for the EZ-Camera, adjusted the grippers, and is still working on more adjustments.

I hit some showstoppers:

I recommend the EZ-Robot HDD (digital) servos. Unfortunately I ran out of them, so I'm using a half-and-half mix and testing some alternatives. So far the best option is the EZ-Robot HDD: no buzz and smooth operation.

I broke an EZ-Robot camera trying to fit it inside a shell case, so please be careful when adapting the existing hardware.

The initial grippers are narrow. I filed them, but they are not even, so they deform the cube.

Plugin road-map: I'm working on solving the cube, but the plugin can also be used to execute cube rotations, query vision details, and develop a solving solution.

@Nink

Regarding the Six and other similar ideas: nothing is impossible, but we can't forget we are using hobby servos with limited accuracy and no position/torque feedback.

Under those conditions, it is almost impossible to dynamically align the movements on the fly.

If you can create a setup, and replicate repetitive movements without losing positions, we can move to the next problem: Software.

OK, great point @ptp. I was just wondering how others could participate easily. I can’t envision EZ-Robot producing a robot cube solver, but I could envision a claw that snaps onto an existing model.

The calibration issue is a concern. The Dynamixel sensors make it so easy to calibrate, but adding pots to obtain sensor data from hobby servos is not a simple process. I was able to pull position data from the Meccanoid servos, but they have no torque, so they are not ideal.

I think we need to solve the calibration issue in general. The home position that EZ-Robot uses is great, but it is a complex manual process to align every servo, and they quickly become unaligned.

There are smart folks here. How can we auto-calibrate hobby servos? Maybe it is an optical sensor alignment add-on: the servos move until we get a lock. This may be as simple as an infrared reflector on the servo arm that an infrared LED can fire at and a sensor can pick up. (This could be quite accurate.) Maybe a rough estimate could be done with visual recognition and the EZ-B camera. Triangulation may be as simple as 2 cameras, or perhaps 1 camera and 2 markers on the servo arm.
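To make the "move until we get a lock" idea concrete, here is a rough Python sketch, not tested on real hardware. Everything in it is an assumption for illustration: `set_position` and `sensor_locked` are hypothetical callbacks you would wire to your own servo controller and IR sensor, the 1 to 180 position range is assumed, and `FakeServo` only simulates a reflector so the logic can be run on a PC.

```python
from typing import Callable, Optional


def find_home_offset(
    set_position: Callable[[int], None],   # hypothetical: commands the servo
    sensor_locked: Callable[[], bool],     # hypothetical: True when IR sensor sees the reflector
    expected_home: int = 90,               # assumed nominal home position
    lo: int = 1,
    hi: int = 180,
) -> Optional[int]:
    """Sweep the servo, find the window where the sensor locks,
    and return (centre of window - expected_home), or None if no lock."""
    hits = []
    for pos in range(lo, hi + 1):
        set_position(pos)
        # On real hardware you would wait here for the servo to settle.
        if sensor_locked():
            hits.append(pos)
        elif hits:
            break  # we have moved past the reflector, stop sweeping
    if not hits:
        return None
    centre = (hits[0] + hits[-1]) // 2
    return centre - expected_home


class FakeServo:
    """Simulation only: the reflector is seen within `window` steps of true_home."""

    def __init__(self, true_home: int, window: int = 2) -> None:
        self.true_home = true_home
        self.window = window
        self.position = 0

    def set_position(self, pos: int) -> None:
        self.position = pos

    def sensor_locked(self) -> bool:
        return abs(self.position - self.true_home) <= self.window


servo = FakeServo(true_home=97)
offset = find_home_offset(servo.set_position, servo.sensor_locked)
print(offset)  # 7: this simulated horn sits 7 steps away from the nominal 90
```

Taking the centre of the lock window rather than the first hit should make the result less sensitive to how wide the reflector is. The returned offset could then be added to every commanded position for that servo.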

I have not given the external calibration issue any real thought, but I have to think it is solvable. Maybe not for this problem, but an auto-calibrate when ARC is powered on would be ideal.
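For the "1 camera and 2 markers on the servo arm" idea, the arithmetic could be as small as the sketch below. It assumes the camera looks straight at the arm's plane of rotation (no perspective correction) and that some vision step has already given the pixel coordinates of both markers; the function names and sample coordinates are made up for illustration.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # (x, y) in image pixels, y grows downward


def arm_angle_deg(pivot_marker: Point, tip_marker: Point) -> float:
    """Angle of the line from the pivot-side marker to the tip-side marker,
    in degrees, counter-clockwise from the image's +x axis, in [0, 360)."""
    dx = tip_marker[0] - pivot_marker[0]
    dy = pivot_marker[1] - tip_marker[1]  # flip y because image y points down
    return math.degrees(math.atan2(dy, dx)) % 360.0


def correction_deg(measured: float, commanded: float) -> float:
    """Smallest signed difference (commanded - measured), in [-180, 180)."""
    return (commanded - measured + 180.0) % 360.0 - 180.0


# Made-up pixel coordinates: tip marker is up and to the right of the pivot marker.
measured = arm_angle_deg((100.0, 200.0), (200.0, 100.0))
print(round(measured, 1))                        # 45.0
print(round(correction_deg(measured, 50.0), 1))  # 5.0
```

This only gives the angle as seen in the image; turning it into a servo position would still need a per-servo mapping, which is not attempted here.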

I have done this project using the Pololu servo controller, but I'm willing to help out where I can here.

If you're looking for serial/feedback servos, take a look at the LewanSoul LX-16A Full Metal 17kg High Torque Serial Bus Servo (I don't mean to take away from the good HDD servos from EZ-Robot).