Hey there!

Here is my latest project. This time it's an emotion-generating project. Activated by speech recognition, your robot will respond with emotions. Happy, sad, angry or tired, they are all there.

It's built as a development project, so you guys can keep developing it.

It works by having an always-running main script that checks values. Those values change based on which emotion you want. Happy? Set $happy to true and all others to false. Sad? Set $sad to true and all others to false.
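For anyone less familiar with scripting, here is the same flag scheme sketched in Python. This is a minimal sketch that assumes four emotions stored as booleans in a dictionary. The project itself is written in EZ-Script, and `set_emotion` and `current_emotion` are hypothetical names, not EZ-Builder functions.

```python
# Hypothetical sketch of the "one flag true, all others false" scheme.
# The real project uses EZ-Script variables such as $happy and $sad.
from typing import Optional

EMOTIONS = ["happy", "sad", "angry", "tired"]

# Every emotion flag starts out false.
flags = {name: False for name in EMOTIONS}


def set_emotion(name: str) -> None:
    """Set one emotion flag to true and every other flag to false."""
    if name not in flags:
        raise ValueError(f"unknown emotion: {name}")
    for other in flags:
        flags[other] = other == name


def current_emotion() -> Optional[str]:
    """What the always-running main script checks: the flag that is true, if any."""
    for name, is_set in flags.items():
        if is_set:
            return name
    return None


set_emotion("happy")
print(current_emotion())  # happy
set_emotion("sad")
print(current_emotion())  # sad
print(flags["happy"])     # False
```

Because every call clears the other flags, at most one emotion can be active at a time.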

Emotions currently integrates the Personality Generator and the RGB Animator to help bring personality to your robots. It works best with the JD Revolution robot, as it has the RGB sensor.

If people who are less skilled at scripting want something added, I can do further development myself.

Unlike my most recent project, Emotions is built entirely in EZ-Builder, with nothing extra. Simply find the file in the EZ-Cloud or in this thread, download it, and you're off!

Adding an emotion is a little difficult, because every script that sets a different emotion must also set the new one to false. As it stands, that means adding an extra variable to at least 8 other scripts, and you also have to add a call to it in the "Emotion Manager". But once that's done (a 10-minute ordeal at best), you're good to go!
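Adding an emotion touches so many scripts because each voice-command script clears the other flags by name. One alternative, which is not how Emotions currently works, is to keep the active emotion in a single variable. Setting a new emotion then replaces the old one automatically, and adding an emotion means adding only one handler. Here is a minimal Python sketch; the handler names and log messages are hypothetical.

```python
# Hypothetical alternative: one "current emotion" variable instead of one
# boolean per emotion. Setting it replaces the previous value, so no script
# has to clear the others.
from typing import Callable, Dict, List, Optional

log: List[str] = []

# Each emotion maps to the routine the manager would run for it.
handlers: Dict[str, Callable[[], None]] = {
    "happy": lambda: log.append("happy animation"),
    "sad": lambda: log.append("sad animation"),
}

current: Optional[str] = None


def set_emotion(name: str) -> None:
    """Replace the active emotion; unknown names are rejected."""
    global current
    if name not in handlers:
        raise ValueError(f"unknown emotion: {name}")
    current = name


def run_manager() -> None:
    """Run the routine for whichever emotion is active, if any."""
    if current is not None:
        handlers[current]()


# Adding a new emotion is one new entry; no existing script changes.
handlers["surprised"] = lambda: log.append("surprised animation")

set_emotion("surprised")
run_manager()
print(log)  # ['surprised animation']
```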

If you want to add actions to an emotion, simply click the edit button of the emotion in the "script manager" and add the command to start that action.
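In Python terms, "add the command to start that action" amounts to appending to an ordered list of actions that runs when the emotion starts. This is a hypothetical sketch: `make_action` and the action labels stand in for whatever EZ-Builder commands you would actually add to the emotion's script.

```python
# Hypothetical sketch: each emotion owns an ordered list of actions.
from typing import Callable, Dict, List

performed: List[str] = []


def make_action(label: str) -> Callable[[], None]:
    """Stand-in for a real robot command; here it just records its label."""
    return lambda: performed.append(label)


emotion_actions: Dict[str, List[Callable[[], None]]] = {
    "happy": [make_action("eyes yellow")],
    "tired": [make_action("eyes dim")],
}


def start_emotion(name: str) -> None:
    """Run every action attached to the emotion, in order."""
    for action in emotion_actions.get(name, []):
        action()


# Adding an action means appending a command to that emotion's list.
emotion_actions["happy"].append(make_action("wave"))

start_emotion("happy")
print(performed)  # ['eyes yellow', 'wave']
```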

I hope you guys like Emotions, and I can't wait to see robots using it!

Disclaimer: This project is untested with robots. It may not work correctly with your robot at first. Use at your own risk. The creator, under the alias Technopro, is not responsible for any damage resulting from the use of this project.

Enjoy your day!

Tech

Discover more robots

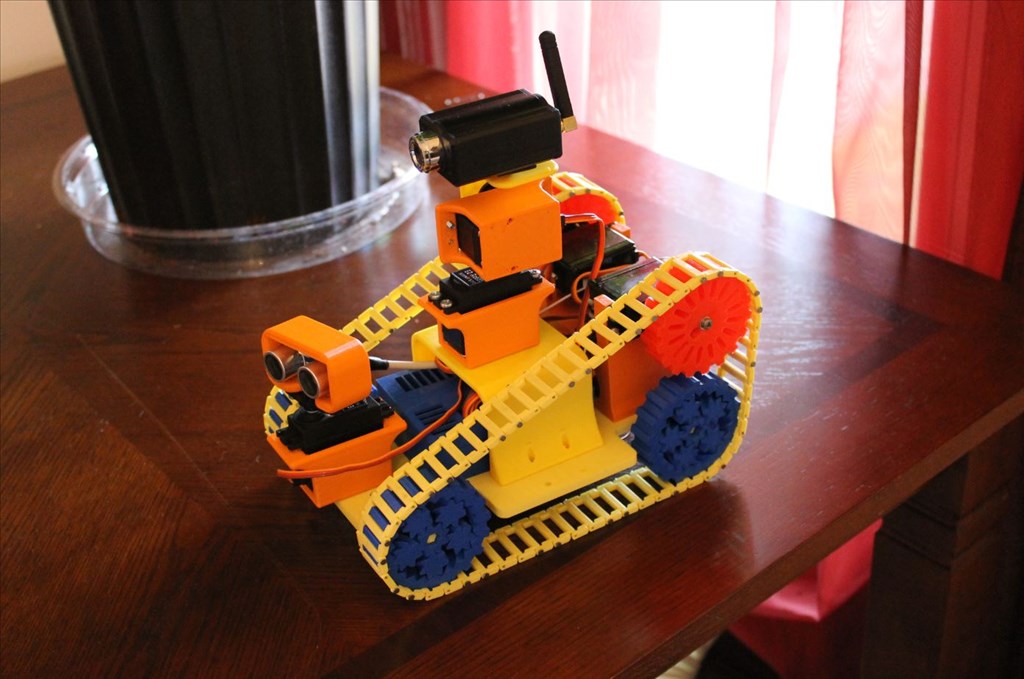

Halbinath's Traxbot - My First Robot

Gwen4156's G-Bot Video

This looks interesting and sounds like a fun project, but I'm curious why you posted it without testing it, as mentioned in your disclaimer?

I meant "untested with a robot". I'll change that in the first post. Thanks.

Hi @Technopro, I'm a little confused about some of the coding and control choices. Could you help me understand them?

So, the voice commands interact with the robot. Each one sets a variable for the emotion and then kicks off the "Emotion Manager" script, which runs the specific emotion script, which in turn activates the RGB LED animator sequence. What I don't understand is what the Personality Generator is doing.

Did you choose to code all of the variables with true/false values in every voice command because it would not work any other way? I probably would have just set the specific emotional state to true, and then, after that emotion had run, had it default back to false after a period of time. That would have been less coding, but it would also change the way it functions a little. With your code, the robot stays in that emotional state until a new voice command is given to change it, correct?

Please don't take these questions as criticism of your code. I'm enjoying your project and appreciate you sharing it; I'm just looking to learn more about it.

Techno,

:-)

@Justin

I'm not sure it could be done any other way, since the variable for the chosen emotion must be set to true and the rest must be set to false to avoid a conflict. As for the dynamic "auto emotion", I want to incorporate that eventually. What's nice is that the emotion can be changed at any time. Speaking of which, I think I've spotted a bug in the code that could prevent that.

@Moviemaker

Thanks! Good to hear from you again.

@Technopro, it looks like all the emotions are set to "false" by default, so if the voice command sets an emotion to "true", then once that emotion runs and completes it could set itself back to false. That doesn't appear to happen now, which is fine if that's intended.

When I think about my robot displaying emotions, I imagine most of them being displayed for a limited amount of time, like a person would. For example, if I saw an old friend, I might be "surprised" and smile really big for a little while, but my emotional display would then go back to default or neutral.

The way I set it up now is hit or miss. The Emotion Manager restarts after every emotion change, but the area before its loop sets all emotions to false. So if an emotion does output, it's only because setting the variable took long enough to happen after that reset, letting the rest of the manager script run and activate it. I'm changing the process.
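The hit-or-miss behavior can be modeled as an ordering problem. Here is a hypothetical Python simulation, not the project's EZ-Script. Whether the emotion shows depends on whether the voice command writes its flag before or after the manager's start-up reset. If the reset is removed and the voice command clears the other flags itself, the result no longer depends on timing.

```python
# Hypothetical model of the race between a voice command and the restarted
# Emotion Manager. Each step is one thing that happens, in order.
from typing import List

EMOTIONS = ["happy", "sad", "angry", "tired"]


def run(steps: List[str], reset_on_start: bool) -> List[str]:
    """Replay the steps and return the emotions the manager ends up showing."""
    flags = {name: False for name in EMOTIONS}
    shown: List[str] = []
    for step in steps:
        if step == "manager_start" and reset_on_start:
            # The area before the manager's loop clears every flag.
            for name in flags:
                flags[name] = False
        elif step == "manager_check":
            shown.extend(name for name, is_set in flags.items() if is_set)
        elif step.startswith("set:"):
            # The voice command sets its emotion and clears the others.
            target = step[len("set:"):]
            for name in flags:
                flags[name] = name == target
    return shown


flag_first = ["set:happy", "manager_start", "manager_check"]
restart_first = ["manager_start", "set:happy", "manager_check"]

print(run(flag_first, reset_on_start=True))     # [] (emotion lost)
print(run(restart_first, reset_on_start=True))  # ['happy']
print(run(flag_first, reset_on_start=False))    # ['happy']
```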

And it's changed! Change notes can be found in the project. I also updated the EZ-Cloud version; look for EMOTIONS V2 in the EZ-Cloud.

Download: EMOTIONSV2.EZB

@JustinRatliff Emotions now time out after a settable amount of time in the @period script.
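Here is a time-out like the one Justin suggested, sketched in Python. The class name `EmotionState`, the `period` parameter (in seconds) and the injectable clock are hypothetical and only illustrate the idea. The project's own timeout is implemented in EZ-Script.

```python
# Hypothetical sketch: one active emotion that reverts to neutral after a
# settable period. The clock is injectable so the behavior can be tested.
import time
from typing import Callable, Optional


class EmotionState:
    def __init__(self, period: float, clock: Callable[[], float] = time.monotonic):
        self.period = period  # settable timeout in seconds
        self.clock = clock
        self.active: Optional[str] = None
        self.started = 0.0

    def set(self, name: str) -> None:
        """Switch to a new emotion and restart the timer."""
        self.active = name
        self.started = self.clock()

    def current(self) -> Optional[str]:
        """Return the active emotion, or None once the period has elapsed."""
        if self.active is not None and self.clock() - self.started >= self.period:
            self.active = None  # timed out: back to neutral
        return self.active


# Usage with a fake clock so the example is deterministic.
fake_now = [0.0]
state = EmotionState(period=5.0, clock=lambda: fake_now[0])
state.set("surprised")
fake_now[0] = 3.0
print(state.current())  # surprised
fake_now[0] = 5.0
print(state.current())  # None
```

Setting a new emotion restarts the timer, so changing emotion at any time still works as before.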