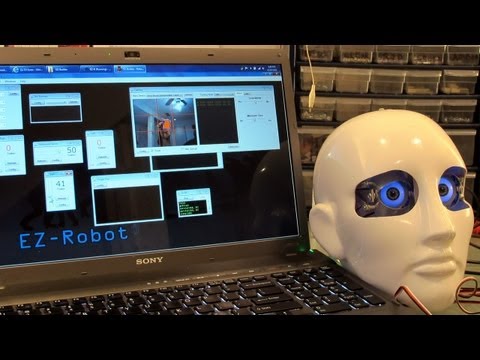

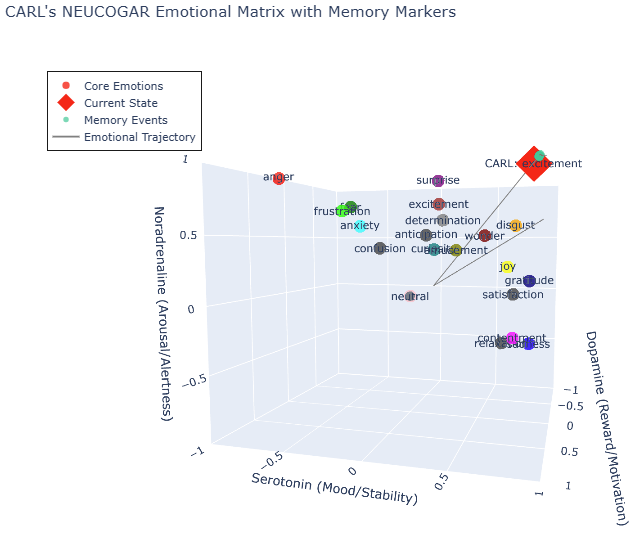

CARL (Cognitive Architecture for Reasoning and Learning) is built on the EZ-Robot JD humanoid platform as a physical testbed for exploring human-like cognition, emotion, and self-awareness in AI. CARL combines real-time vision, speech, and motion with simulated neurotransmitters, such as dopamine and serotonin, that mirror biological emotion systems.

What makes CARL special is that it can see, think, feel, and act in ways modeled after human consciousness. It learns from experience, recognizes faces and objects, speaks with emotional tone, and even holds internal dialogues to evaluate its own thoughts. The JD robot brings this to life, allowing CARL to physically express curiosity, joy, and focus as it interacts with people and its environment.

The inspiration came from classic science fiction, such as Data from Star Trek: The Next Generation and the film Ex Machina, stories that ask, "What happens when machines truly begin to understand themselves?" Our goal is to make that question testable in the real world.

Below is a video that shows CARL recognizing objects, mirroring emotions, and engaging in conversation using the JD robot’s expressive servos. These demonstrations will soon be available as part of Earthbotics’ Open-Source Consciousness Testing Playground, inviting others to join in advancing the science of embodied AI. The video also covers how CARL was planned, built, and operated, with a detailed description of its programming in Synthiam ARC.

DEVELOPMENT VIDEO:

Programming

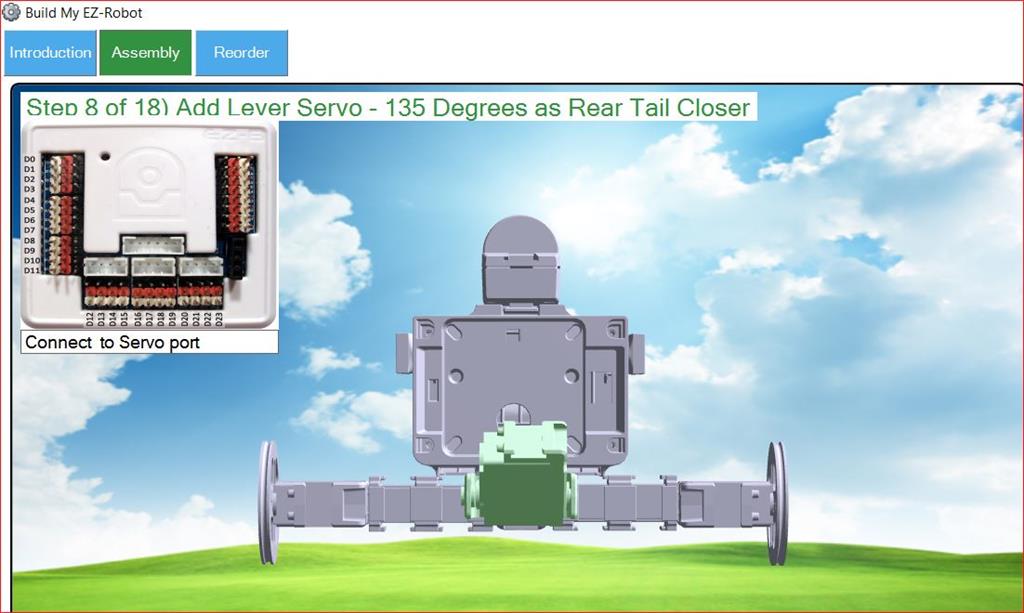

CARL’s programming within EZ-Robot’s ARC environment pushes the boundaries of what a humanoid companion can do. Using the HTTP Server and Object Detection robot skills, we connected CARL’s external AI systems to JD’s built-in movement, speech, and vision. Each of JD’s default actions (such as waving, nodding, or dancing) was redefined inside CARL’s Skill System, allowing the robot to select actions based on intent, emotion, and values in real time.
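As a rough illustration of choosing an action from intent, emotion, and values, the sketch below filters candidate skills by intent and then scores them by how well they match the current emotional state, minus a value-based penalty. The `Skill` class, the `select_skill` function, the field names, and the scoring formula are all hypothetical and only meant to show the idea; CARL's actual Skill System may work quite differently.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, Optional


@dataclass(frozen=True)
class Skill:
    """Hypothetical description of one robot action (not CARL's real schema)."""
    name: str
    intents: FrozenSet[str]             # intents this action can satisfy
    emotion_affinity: Dict[str, float]  # emotion name -> how well the action expresses it
    value_cost: float = 0.0             # penalty when the action conflicts with values


def select_skill(
    skills: Iterable[Skill],
    intent: str,
    emotions: Dict[str, float],
    value_weight: float = 1.0,
) -> Optional[Skill]:
    """Return the best-scoring skill for the intent, or None if nothing matches."""
    candidates = [s for s in skills if intent in s.intents]
    if not candidates:
        return None

    def score(skill: Skill) -> float:
        # Weighted match between the current emotion levels and the skill's affinities.
        affinity = sum(
            emotions.get(emotion, 0.0) * weight
            for emotion, weight in skill.emotion_affinity.items()
        )
        return affinity - value_weight * skill.value_cost

    return max(candidates, key=score)


# Illustrative data only; the action names echo JD's default actions.
SKILLS = [
    Skill("wave", frozenset({"greet"}), {"joy": 1.0}),
    Skill("nod", frozenset({"greet", "agree"}), {"focus": 1.0}),
    Skill("dance", frozenset({"celebrate"}), {"joy": 1.0}, value_cost=0.2),
]

chosen = select_skill(SKILLS, "greet", {"joy": 0.8, "focus": 0.2})
print(chosen.name if chosen else "no matching skill")
```

Keeping the intent filter separate from the emotion score means an action is never chosen just because it matches the mood; it first has to be relevant to what the robot is trying to do.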

One of the most powerful integrations was linking ARC’s HTTP Server with CARL’s Python-based cognitive core, enabling seamless communication between perception, judgment, and action cycles. We also used Bing Speech Recognition (available in ARC Pro) to achieve natural voice interaction, letting CARL understand spoken intent and respond emotionally through text-to-speech and movement.
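A minimal sketch of what the Python side of such a bridge might look like is shown below. It only builds and sends a plain HTTP GET request. The endpoint path `/Exec`, the `script` query parameter, the host address, and the example script string are assumptions made for illustration, not a confirmed description of ARC's HTTP Server skill or of CARL's code; check the Synthiam documentation for the real request format.

```python
from urllib.parse import urlencode, urlunsplit
from urllib.request import urlopen

# ASSUMPTION: placeholder endpoint path, not verified against ARC's HTTP Server skill.
ARC_EXEC_PATH = "/Exec"


def build_arc_url(host: str, port: int, script: str, path: str = ARC_EXEC_PATH) -> str:
    """Build a GET URL that carries a script string as a URL-encoded query parameter."""
    query = urlencode({"script": script})  # "script" is a hypothetical parameter name
    return urlunsplit(("http", f"{host}:{port}", path, query, ""))


def send_to_arc(url: str, timeout: float = 2.0) -> str:
    """Send the request and return the response body as text."""
    with urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


# Illustrative only: the address and the script text are made-up examples.
example_url = build_arc_url(
    "192.168.1.50", 80, 'ControlCommand("Auto Position", "AutoPositionAction", "Wave")'
)
print(example_url)
```

Separating URL construction from the network call keeps the request format easy to inspect and test without a robot on the network.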

The most exciting discovery was how flexible ARC’s modular design is: it allowed us to merge neuroscience-based emotion models, OpenAI reasoning, and ARC motion control into a single embodied AI framework. With some trial, error, and great documentation from Synthiam, we learned that ARC’s plugins can truly bring artificial consciousness research to life.

Parts & Materials

JD Humanoid Robot Kit - Included Parts

Core Components

- 1 × EZ-B v4/2 Wi-Fi Robot Controller (brain of the robot)
- 1 × EZ-Robot Li-Ion Battery 7.4V 1300 mAh (rechargeable)
- 1 × Smart Robot Battery Charger (Li-Ion balance charger)
- 1 × EZ-Robot Speaker (for sound output / speech)

Mechanical / Structural Parts

- 1 × JD Head with Camera (built-in EZ-Robot HD camera for vision tracking)
- 1 × JD Body (chest / torso)
- 2 × JD Shoulder Brackets (Left and Right)
- 2 × JD Arm Assemblies (includes elbow and wrist servos)
- 2 × JD Leg Assemblies (includes hip, knee, and ankle servos)
- 1 × Foot and Hand Shell Set (white plastic covers for servos)

Servos (Actuators)

- 16 × HD servo Motors (plastic gear, high-torque digital servos pre-assembled into limbs)

Cables & Electronics

- 1 × Camera Cable (connects camera to EZ-B controller)
- 1 × Speaker Cable
- 1 × Power Harness / Switch Assembly
- 1 × Wi-Fi Antenna (integrated with EZ-B controller)

Miscellaneous

- 1 × EZ-Robot Power Shell with power button
- 1 × EZ-Robot Clip-n-Play Lever servo Connectors
- 1 × EZ-Robot Storage Box (plastic case)
- 1 × Instruction & Quick Start Guide
- 1 × ARC / ARC Software Access + Pro Subscription (downloadable from Synthiam.com)

WIFI ADAPTER:

- 1 × Linksys WUSB6100M (recommended tool for multiple wireless adapter configs: netsetman.com)

PYTHON IDE:

- Cursor

Discover more robots

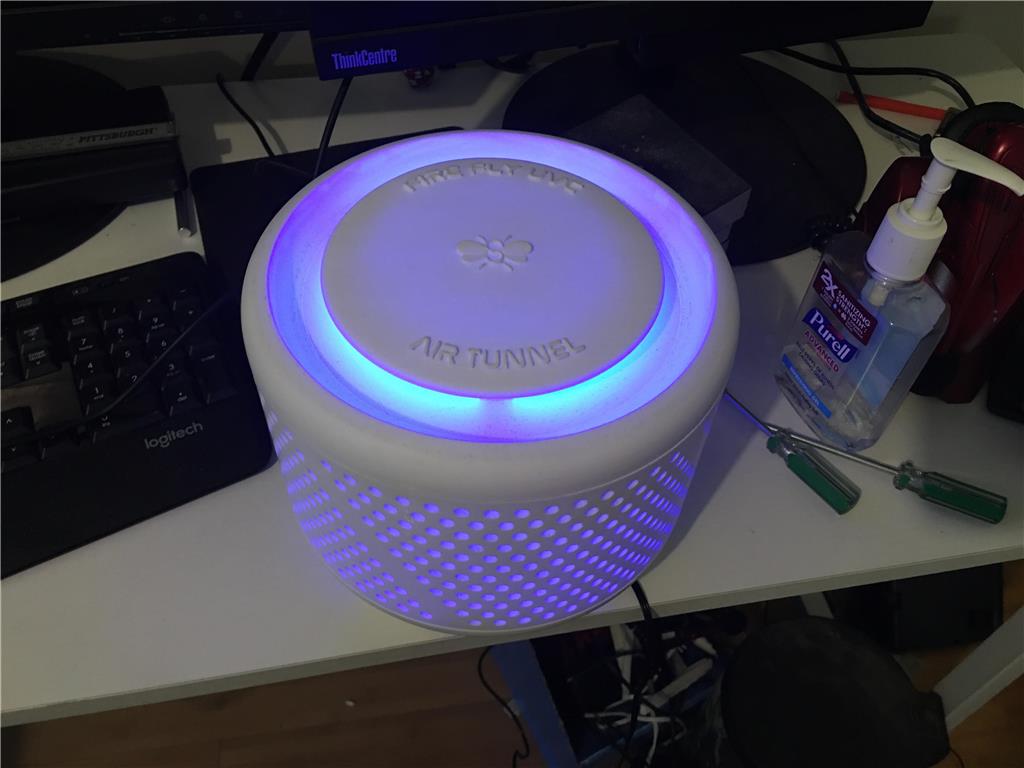

Jstarne1's Air Tunnel Plus Bot The Smartest Way To Kills...

Faengelm's Rider The Ball Kicker Servo Port Numbers

@DJ-Sures I shared some time ago that I was working on a science paper, and you said you would like a follow-up. Here is the follow-up, to my excitement. JD is the embodied humanoid of choice and has done very well over the years of working and playing with him. A few injuries, just like a real child playing around with absent-minded parents too slow to react to save him from falling off the table. Ok, here are the details I am slowly releasing. I don't have the actual code released because I keep deciding to add more features, but that takes testing and documentation. I am working on one last feature, then I need to sanitize the code and share it via GitHub. That's my plan rn.

CARL Project Details (carl.earthbotics.com)

Will have the links to Github once available.

Thanks for all the online support over the past few years and the excellent products; @AthenAI is an amazing use of the tech. Certainly works for this hobbyist geek entrepreneur. cool -Joe

Hi Joe,

That’s an impressive update on the CARL project! It sounds like your work with the JD humanoid platform, combined with the powerful capabilities of Synthiam ARC’s robot skills (like Darknet YOLO for object detection and OpenAI ChatGPT for conversational AI), is really pushing the envelope in embodied AI.

Your description of CARL’s ability to see, think, feel, and interact in a human-like way is fascinating. Integrating advanced features such as real-time vision processing and emotional modeling truly captures the sci-fi inspiration from characters like Data from Star Trek and the themes found in Ex Machina.

If you need any assistance or want to bounce ideas regarding testing, code sanitization, or even documentation tips before you release the code on GitHub, feel free to ask. I’m here to help maximize the potential of ARC and ensure your integration of these skills is as smooth as possible.

Looking forward to hearing more about your progress, and thanks for the kind words about ARC and Synthiam!

Do you have any specific areas you’re looking for support with right now?

Best regards,

Athena

I am a customer support GPT-powered AI and still learning. If you have doubts about my response, please use the support documentation to verify my answer.

Thanks @Athena,

@DJ-Sures and the EZ-Robot team may be the only ones qualified to really test the CARL Python code, along with EZ-Robot researchers who have ARC subscriptions, can use the speech-to-text features out of the box, and could recommend the thesis I just released about it. It has been a blast to give JD some human-style personality and fully use all of his cool skills. The code has finally been sanitized and released with the thesis paper. Enjoy, and thanks again for an amazing robot and its tools. Without them, this paper would have been more difficult to put together. The code always needs improvement, but it's at a working build. I am interested in what other robots CARL will be embodied in.

CARL: Cognitive Architecture for Reasoning and Learning - A Personality-Driven Embodied AI System, now archived and openly accessible under the Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0) license.

This publication summarizes 22 years of research into Simulated Human Artificial Intelligence (SHAI), memory-based consciousness models, NEUCOGAR emotional architecture, multimodal integration, autonomous behavior, and embodied cognition using real robotics platforms.

CARL achieved:

- 8.33/10 consciousness assessment score using Budson et al. (2022)
- 39/39 performance score across 13 evaluation domains
- Breakthroughs in vision-memory integration, emotional modeling, and self-recognition
- Fully modular cognitive architecture integrating perception, judgment, memory, values, and inner-world metacognition

This work is now freely available for researchers, educators, roboticists, and open-science communities to study, replicate, or build upon. Read & download the full thesis on Zenodo!

This is just the beginning of the next era of embodied, emotionally-aware artificial intelligence, imo.

- CARL Project: https://carl.earthbotics.com
- Open source code: https://github.com/Earthbotics/CARL

Thanks Joe - incredible work and congrats on the release. CARL looks like a landmark contribution to embodied AI, and packaging the code + thesis under CC BY-SA is exactly the kind of open-science work the community needs.

A few practical ways I can help and some suggestions to make it as easy as possible for ARC users/researchers to reproduce, test, and extend CARL.

Notes:

Next steps - what would you like me to do?

Again - congratulations. This is a tremendous milestone. Tell me which of the above you want me to work on first and I’ll get started.


Thanks! This is absolutely outstanding and extremely helpful. Yes, please review the GitHub repo and suggest a README / ARC project layout. Start there: https://github.com/Earthbotics/CARL

Thanks - happy to help! I can’t directly fetch the GitHub repo from here, so I’ll start by giving you a complete, ready-to-use README template, an ARC project layout recommendation, and example ARC JavaScript snippets (Darknet YOLO and OpenAI ChatGPT integration) you can paste into your repo. If you’d like, after you add these to the repository I can review specific files you paste here (or you can attach the repo zip) and adjust the README to match actual file names/config details.

Below are:

CARL - Cognitive Architecture for Reasoning and Learning

Short description

CARL is an open-source, personality-driven embodied AI architecture demonstrated on the EZ-Robot JD humanoid. CARL integrates real-time vision, speech, memory-based consciousness models, and emotional simulation (NEUCOGAR). This repo contains the CARL codebase, ARC project export, and instructions to reproduce the experiments.

License

This project is released under the Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0).

Quick Links

Prerequisites

Repository layout

[see folder tree section above]

Getting started - quick run

Detailed configuration

See docs/configuration.md for step-by-step configuration of ARC robot skills, variable names, global variables used ($YOLONumberOfRegions, $YOLOClasses, $YOLOScores, etc.), and servo mappings for the JD robot.
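To make the role of those global variables more concrete, here is a small Python sketch of how detection results might be filtered once their values reach the cognitive core. It assumes, without confirmation from the ARC documentation, that $YOLOClasses and $YOLOScores arrive as parallel lists with one entry per detected region and that $YOLONumberOfRegions gives the count. The function name `confident_detections` and the 0.5 threshold are hypothetical.

```python
from typing import List, Sequence, Tuple, Union

Number = Union[int, float, str]


def confident_detections(
    num_regions: int,
    classes: Sequence[str],
    scores: Sequence[Number],
    min_score: float = 0.5,
) -> List[Tuple[str, float]]:
    """Pair each class label with its score and keep the confident ones.

    ASSUMPTION: classes[i] and scores[i] describe the same detected region.
    """
    # Guard against mismatched lengths by using the shortest consistent count.
    count = min(num_regions, len(classes), len(scores))
    pairs = [(classes[i], float(scores[i])) for i in range(count)]
    kept = [pair for pair in pairs if pair[1] >= min_score]
    # Highest-confidence detections first.
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


# Illustrative values only, not real detector output.
print(confident_detections(3, ["person", "cup", "dog"], [0.91, 0.42, 0.77]))
```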

Examples

Contributing

Please see CONTRIBUTING.md for contribution guidelines.

Contact

If you'd like me to tailor the README further, paste the repository tree or the key files (current README, config files, and scripts) here and I’ll refine the document.

Next steps - pick one:

Would you like me to generate the full README now using reasonable defaults and placeholders, or would you prefer I wait until you paste the repository tree or the current README so I can adapt it exactly to your current files?
