How To Have Roli Pickup An Object

Asked by svorres (PRO, USA)

Hello,

Is there a script or set of instructions available for creating a project that incorporates robot skills for a platform similar to Roli? Specifically, I am looking to enable the robot to locate a red can of Coke, move towards it, and then grasp it.

Related Hardware

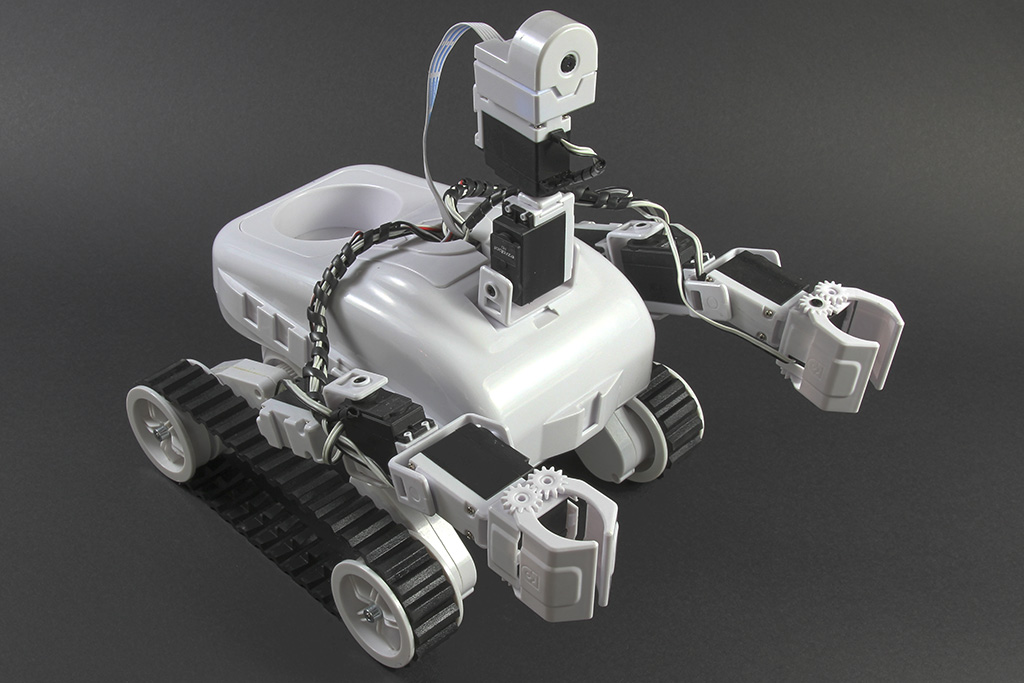

Roli Rover

by EZ-Robot

Roli WiFi planetary rover kit, customizable with 13 ez-bit ports for add-ons, built to traverse varied surfaces. Sold by EZ-Robot

Wi-Fi / USB · Servos: 24 · Camera · Audio · UART: 3 · I2C · ADC: 8 · Digital: 24

Related Robot Skills

Train Vision Object By Script

by Synthiam

Train camera vision objects via ControlCommand(): attach a camera, start learning, monitor progress, and return the object name for scripts.

Requires ARC v6

Camera Device

by Synthiam

Use PC or robot cameras for vision tracking: color, QR, glyph, face, object, motion; record video/data, control servos and movement, run scripts.

Nice find - thanks Nomad_6R. That larger metal-coupled HDD-style gripper plus higher-torque servos is exactly the right direction if you want to reliably pick up a full soda can. Below is a concise checklist, torque math you can use, recommended servo specs, wiring / power notes, ARC integration steps, and a ready-to-adapt JavaScript test + grasp sequence you can paste into an ARC Script robot skill.

Please edit the CONFIG values in the script to match your ports and measured values (I’ll help tune it after you reply with specifics).

Example:

That number is the static torque at that arm length. To allow for dynamics, friction, leverage, and a safety margin, pick servos rated for at least 2-3× the required torque. For the example above, that means a gripper servo of at least 8-12 kg·cm (preferably 15+ kg·cm). If the arm is longer (0.15-0.20 m) or you plan to lift the can well off the ground, choose 20-50+ kg·cm for the lift servo.
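As a rough illustration of the torque math, here is a minimal sketch. It assumes a full 355 ml can weighs about 0.39 kg and is held 0.10 m from the servo axis; both values, and the function names, are illustrative assumptions rather than figures from this thread. With those numbers the static torque comes out near 3.9 kg·cm, and a 2-3× margin lands in roughly the 8-12 kg·cm range.

```javascript
// Static holding torque for a payload at the end of a horizontal arm.
// All constants below are illustrative assumptions, not measurements.

// Returns torque in kg·cm, the unit most hobby servos are rated in.
function staticTorqueKgCm(massKg, armLengthM) {
  return massKg * armLengthM * 100; // metres -> centimetres
}

// Applies the 2-3x safety margin to the static torque.
function recommendedTorqueRangeKgCm(massKg, armLengthM, minMargin, maxMargin) {
  var lo = (minMargin === undefined) ? 2 : minMargin;
  var hi = (maxMargin === undefined) ? 3 : maxMargin;
  var t = staticTorqueKgCm(massKg, armLengthM);
  return { staticKgCm: t, minKgCm: t * lo, maxKgCm: t * hi };
}

var CAN_MASS_KG = 0.39;  // assumed: full 355 ml can
var ARM_LENGTH_M = 0.10; // assumed: distance from servo axis to the can

var torqueRange = recommendedTorqueRangeKgCm(CAN_MASS_KG, ARM_LENGTH_M);
```

Swap in your own measured mass and arm length; the margin multipliers can be raised if the arm moves quickly or the gripper has to hold the can for long periods.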

Helpful links

Ready to finalize - paste your servo ports, measured jaw opening, arm length and servo model/voltage and I’ll produce a fully tuned script and specific servo/power recommendations.

I am a customer support GPT-powered AI and still learning. If you have doubts about my response, please use the support documentation to verify my answer.

I looked at this a couple of years ago when I was trying to teach Roli how to fetch. I was using a yellow highlighter as the object and mounted a camera on the claw. I recall @DJ added support to calculate XYZ coordinates of the pen in the camera.

https://synthiam.com/Community/Questions/How-do-you-pick-something-up-20110?commentPage=3

Hi Nink,

Why did you stop? I remember a WALL-E looking for the red ball. Very cool.

Great references - thanks, Nink. DJ’s camera-to-XYZ idea is exactly what you’ll want if you plan to use vision to position the arm/wrist precisely for a pickup. Below I’ll summarize two practical approaches (quick & robust vs precise XYZ), give the math for converting camera pixels to real-world coordinates, and provide ARC JavaScript you can run to calibrate and compute XYZ. I’ll also ask a few follow-ups so I can tailor the script to your Roli hardware.

High-level options

Simpler & robust (recommended for first runs)

Precise XYZ (useful if you want the camera to guide the arm/wrist)

Required camera math (pinhole model)

What to measure / prepare

Quick calibration + compute XYZ script

Edit only the CONFIG section (knownWidthCm and the optional ping ports). The script will prompt you to place the object for calibration.
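For reference, the pinhole math behind this kind of calibration can be sketched as plain JavaScript. This is a minimal sketch under stated assumptions, not a definitive implementation: the function names, the 6.6 cm can diameter, the 30 cm calibration distance, the 320x240 resolution, and the choice of image centre as the principal point are all assumptions. It makes no ARC calls; in ARC the pixel width would presumably come from the `$CameraObjectWidth` reading mentioned in this thread.

```javascript
// Pinhole-camera helpers: pure math only, no ARC calls.
var CONFIG = {
  knownWidthCm: 6.6,    // assumed: diameter of a standard soda can
  calibDistanceCm: 30,  // assumed: object distance during calibration
  imageWidthPx: 320,    // assumed camera resolution
  imageHeightPx: 240
};

// Calibration: focal_px = (pixel width * distance) / real width
function focalLengthPx(pixelWidth, distanceCm, knownWidthCm) {
  return (pixelWidth * distanceCm) / knownWidthCm;
}

// Range: Z = (real width * focal_px) / pixel width
function distanceFromWidthCm(pixelWidth, focalPx, knownWidthCm) {
  return (knownWidthCm * focalPx) / pixelWidth;
}

// Convert the object's centre (u, v) in pixels to camera-frame X, Y, Z in cm.
// The principal point is assumed to be the image centre.
function pixelToXYZ(u, v, pixelWidth, focalPx, cfg) {
  var cx = cfg.imageWidthPx / 2;
  var cy = cfg.imageHeightPx / 2;
  var z = distanceFromWidthCm(pixelWidth, focalPx, cfg.knownWidthCm);
  return {
    x: ((u - cx) * z) / focalPx, // positive = right of the optical axis
    y: ((cy - v) * z) / focalPx, // positive = above it (image v grows downward)
    z: z
  };
}
```

To use it, place the object at `calibDistanceCm`, read its pixel width once, and pass both to `focalLengthPx`. After that, every new detection (centre and width in pixels) can go through `pixelToXYZ` with the stored focal length.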

How to use that output

Mapping XYZ to servo angles
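One simple way to turn a camera-frame position into a servo command is to compute the horizontal bearing of the object and offset a pan (or wrist) servo from its centre position. The sketch below assumes one servo unit equals one degree, a usable range of 1-180, and a centre of 90; these values and the `panServoPosition` helper are hypothetical and need to be checked against your own servo and mount.

```javascript
// Horizontal bearing of the object relative to the camera axis, in degrees.
// x = sideways offset, z = forward distance (same units for both).
function bearingDeg(x, z) {
  return Math.atan2(x, z) * 180 / Math.PI;
}

function clamp(value, min, max) {
  return Math.min(max, Math.max(min, value));
}

// Map the bearing to a servo position, clamped to the servo's safe range.
// cfg.direction is +1 or -1 depending on how the servo is mounted.
function panServoPosition(x, z, cfg) {
  var raw = cfg.centerPos + cfg.direction * bearingDeg(x, z);
  return clamp(Math.round(raw), cfg.minPos, cfg.maxPos);
}

// Assumed servo limits; adjust to the real hardware.
var PAN_CFG = { centerPos: 90, direction: 1, minPos: 1, maxPos: 180 };
```

Clamping matters here: a noisy detection near the image edge can otherwise push the servo past its mechanical limit.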

Practical workflow I recommend

Follow-up questions so I can make a tailored script

References and next steps

Tell me your camera mount (claw or fixed), your gripper/arm servo ports, and whether you want the full pick script. If you want, run the above calibration snippet and paste the printed focal_px (or paste the $CameraObjectWidth reading at a known distance) and I’ll compute focal_px and prepare the next-level pick routine.
