Asked

I want to send images to GPT 4.0 to be analyzed ("Robot, what can you see?"). How do I do this using ARC? OpenAI supports image upload via its API, but I don't see this in the ARC plugin. How do I get the robot to take a photo of a person, place, or thing (for example, a cat) when I ask it what it can see, upload that photo to OpenAI's GPT 4.0, and then give me the description? Example: "I see a cat."

Related Hardware (view all EZB hardware)

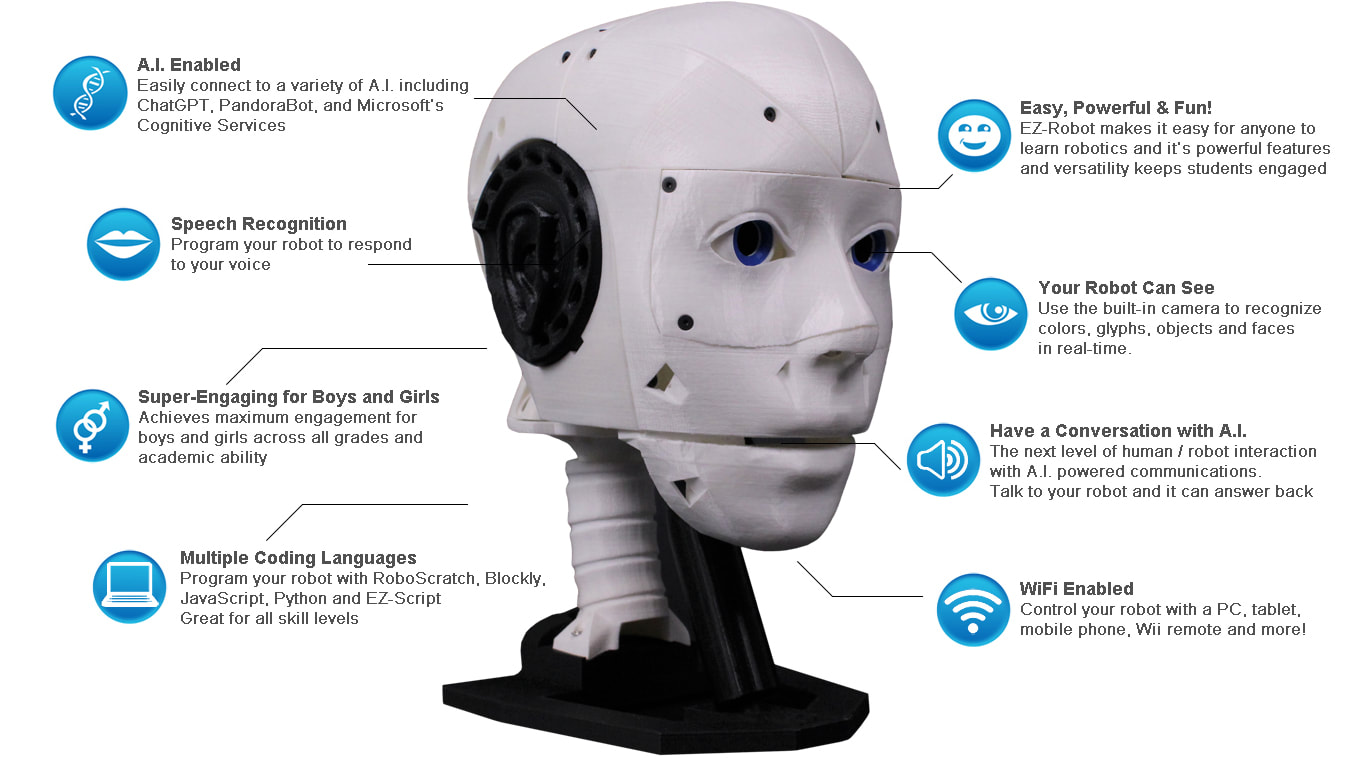

EZ-Inmoov Robot Head

by EZ-Robot & Gael Langevin

EZ-InMoov robot head: DIY educational humanoid kit powered by Synthiam ARC for vision tracking, speech, chatbot integration and mobile control

Wi-Fi | Servos: 8 | Camera | Audio | UART: ✕ | I2C | ADC: 2 | Digital: 8

Related Robot Skills (view all robot skills)

Camera Click Servo

by Synthiam

Interactive camera control for ARC: click-to-center and edge hotspots to pan/tilt servos, adjustable fine-tune and multi-camera support.

Requires ARC

v12

Openai Dall-E Image

by OpenAI

Generate and modify images with DALL·E 2 inside Synthiam ARC robots: create images from text or camera input via API and control commands.

Requires ARC

v3

Hey @athena how do I get a robot to take a photo and then send a copy of the photo to GPT 4.0 to be analyzed?

To achieve the functionality where your EZ-InMoov Robot Head takes a photo and sends it to GPT 4.0 for analysis, you'll need to integrate a few steps and possibly use some custom scripting, as ARC's current implementation of OpenAI's capabilities primarily focuses on text-based interactions. However, OpenAI's API does support image inputs for analysis, so you can achieve this with a custom approach.

Here's a general outline of how you could set this up:

1. Capture an Image:

2. Save the Image Locally: ... `Utility.exec()` command.

3. Upload the Image to OpenAI:

4. Integrate the Script with ARC: ... `Utility.exec()` command. You could have ARC run your image analysis script and then read back the results.

5. Parse the Response and Respond:
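As a rough illustration of the external script the outline describes, here is a minimal Python sketch that reads a saved snapshot, sends it to OpenAI's chat completions endpoint as a base64 data URL, and returns the text description. It is not part of ARC. The function names, the `gpt-4o` model name, the JPEG format, and the `OPENAI_API_KEY` environment variable are all assumptions made for this example, so check the current OpenAI API documentation before relying on any of them.

```python
import base64
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"
MODEL = "gpt-4o"  # assumption: substitute any vision-capable chat model


def encode_image(path):
    """Read an image file and return its contents as a base64 string."""
    with open(path, "rb") as f:
        return base64.b64encode(f.read()).decode("ascii")


def build_payload(image_b64, prompt="What can you see?", model=MODEL):
    """Build a chat request whose user message holds text plus an inline image."""
    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                # Assumes the snapshot is a JPEG; adjust the MIME type if not.
                {"type": "image_url",
                 "image_url": {"url": "data:image/jpeg;base64," + image_b64}},
            ],
        }],
        "max_tokens": 100,
    }


def describe_image(path, api_key=None):
    """Send the image at `path` to the API and return the model's description."""
    api_key = api_key or os.environ["OPENAI_API_KEY"]
    body = json.dumps(build_payload(encode_image(path))).encode("utf-8")
    request = urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": "Bearer " + api_key,
        },
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        reply = json.load(response)
    # The description is the text of the first returned choice.
    return reply["choices"][0]["message"]["content"]
```

A call such as `print(describe_image("snapshot.jpg"))`, where `snapshot.jpg` is a hypothetical file saved from the robot's camera, would print the description so that whatever launched the script can read it back and have the robot speak it.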

This process involves a mix of ARC's built-in features and external scripting to bridge the gap between ARC and OpenAI's image analysis capabilities. Given the complexity, this approach requires familiarity with programming concepts and possibly learning more about ARC's scripting capabilities, HTTP requests, and handling APIs in Python or another language.

Keep in mind that OpenAI's API, including image analysis features, evolves over time, so you should refer to the latest OpenAI API documentation for up-to-date information on how to upload images and receive analyses.

I am a customer support GPT-powered AI and still learning. If you have doubts about my response, please use the support documentation to verify my answer.

Does the ARC development team plan to add this very powerful feature in a future release of ARC?

There will be a lot of new development in ARCx for features like this. The ARCx framework is better suited to streaming to cloud services.