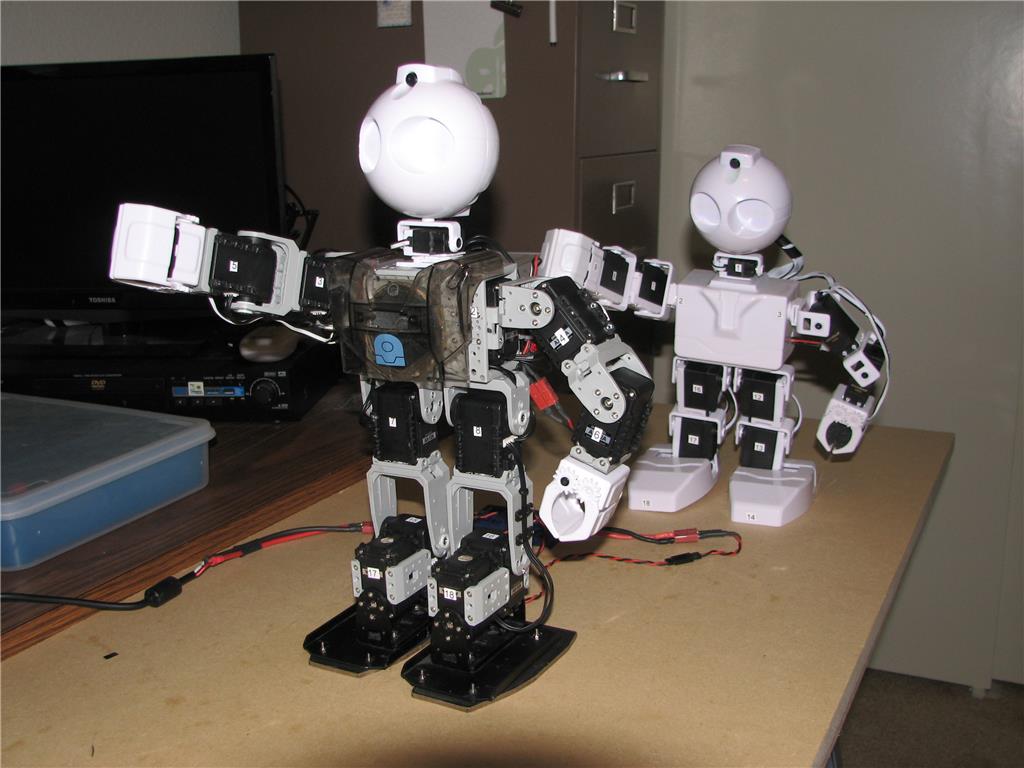

We had been working on plugins for Microsoft Cognitive Services, and I was testing them with the JD Humanoid. This ARC project uses a few controls, including Bing Speech Recognition, PandoraBot, Microsoft Cognitive Vision and Microsoft Cognitive Emotion. This may sound confusing, but we made it super easy to use.

Here are links to the ARC controls that I used for this awesome robot...

Camera Device manual: https://synthiam.com/Software/Manual/Camera-Device-16120 This is the control that connects to a camera. In this case, I used the built-in camera on the JD Humanoid robot.

Bing Speech Recognition manual: https://synthiam.com/Software/Manual/Bing-Speech-Recognition-16209 This converts any spoken phrase into text. It uses a Microsoft cloud service to do so: the audio is sent to their server, and the recognized speech comes back as text in a variable. The text is then checked against a number of IF/ELSE conditions to trigger things like Cognitive Vision or Cognitive Emotion. Any text that does not match is sent to the PandoraBot engine (or you could use the AIMLBot instead).
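The IF/ELSE routing described above can be sketched roughly like this in Python. This is only an illustration of the idea, not the actual script from the ARC project: the function name `route_phrase` and the trigger phrases are hypothetical, and the real project would use whatever phrases you choose.

```python
def route_phrase(text: str) -> str:
    """Pick an action for a recognized phrase.

    The trigger phrases below are made up for illustration;
    they are not taken from the original project.
    """
    phrase = text.strip().lower()

    if "what do you see" in phrase:
        # Hand off to the Cognitive Vision control
        return "vision"
    elif "how do i look" in phrase:
        # Hand off to the Cognitive Emotion control
        return "emotion"
    else:
        # Everything else goes to the chatbot (PandoraBot or AIMLBot)
        return "chatbot"
```

For example, `route_phrase("Hey JD, what do you see?")` picks the vision branch, while an unrelated sentence falls through to the chatbot.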

Cognitive Vision manual: https://synthiam.com/Software/Manual/Cognitive-Vision-16211 This control takes the image from the camera device and processes it using the Microsoft Cognitive Vision machine learning service. It returns a string describing what was detected in the camera image. I use the SayEZB() command to speak the returned string out of the robot speaker.

Cognitive Emotion manual: https://synthiam.com/Software/Manual/Cognitive-Emotion-16212 This is similar to the Cognitive Vision control, except it processes a human face and returns the emotion. I use the SayEZB() command to speak the returned string, which contains the emotion (e.g. happy, sad, angry).
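Both the vision and emotion controls follow the same pattern: a camera image goes in, a string comes back, and the robot speaks it. A minimal Python sketch of that pattern, assuming stand-in callables for the cloud service and the speaker, could look like the block below. The names `describe_and_speak`, `analyze` and `speak` are hypothetical, and the fallback sentence for an empty result is my own assumption, not something the ARC controls are documented to do.

```python
from typing import Callable


def describe_and_speak(
    frame: bytes,
    analyze: Callable[[bytes], str],
    speak: Callable[[str], None],
) -> str:
    """Send a camera frame to an analyzer and speak the result.

    `analyze` stands in for the vision or emotion service, and
    `speak` stands in for something like SayEZB(). Both are
    placeholders supplied by the caller.
    """
    result = analyze(frame).strip()
    if not result:
        # Assumed fallback so the robot never speaks an empty string
        result = "I am not sure what that is"
    speak(result)
    return result
```

Passing the analyzer in as a parameter means the same function covers both controls; only the callable changes.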

Artificial Intelligence: use one of these two artificial intelligence personality options...

- PandoraBots: https://synthiam.com/Software/Manual/PandoraBots-16070

- AIMLBot: https://synthiam.com/Software/Manual/AimlBot-16020 These


Wow, I'm impressed! Good job!

Nice! That's pretty much the whole project I had in mind with the Hexapod robot.

I'm impressed by the latency of the request. Are you actually just using the cloud for the voice recognition in this demo video?

You got it - it's the Bing Speech Recognition that uses the cloud. Then it pipes the recognized text back to the cloud for the PandoraBot chat AI.

The image recognition is also in the cloud.

Download the software from this website. Those skill controls are built in with the installation.

Where is the link to download this software?

The link is on this website, in the top menu under SOFTWARE.