Andy Roid

USA

Any updates on the indoor navigation system, camera and beacon system? There was discussion it was in the works. Just curious. Ron

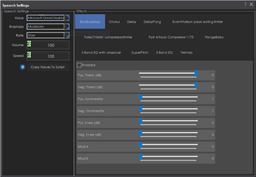

Speech Synthesis Settings

— Configure Windows Audio.say()/Audio.sayEZB() TTS on EZB#0: voice, emphasis, rate, volume, speed/stretch and audio effects; copy control script.

Try it →

Speech Synthesis Settings

— Configure Windows Audio.say()/Audio.sayEZB() TTS on EZB#0: voice, emphasis, rate, volume, speed/stretch and audio effects; copy control script.

Try it →

Any updates on the indoor navigation system, camera and beacon system? There was discussion it was in the works. Just curious. Ron

I believe the recent update to ARC which allowed 255 EZ-B controllers to be connected to an ARC session was a step toward doing this. I could be wrong, but it feels like it was.

Dave, I got your overview in the other discussion on webcams. I was wondering if the separate EZ-B with a camera would still be a better way to go than trying to track using the motor/encoder system and an RF signal. I have no real programming knowledge, but wondered if a grid system (coordinates) could be made from camera data to navigate a robot. Then the onboard controller could track from the current point to a programmed point. Kind of a radar navigation. An infrared signal could identify the room, which would select the map of coordinates to travel through. Am I on the right track? Ron

I think that the best solution is both. A camera can be used outside of the robot to tell the location of the robot. I think that right now the best way to detect objects is through echo type systems. Eventually I would like to move from these echo systems to vision systems. This is the only platform that I know of that gives you all of the options you could dream of.

Echo systems have their disadvantages. Try to get an echo sensor to respond to a fur-covered piece of furniture. The camera can see it without any issue. The camera has to be able to recognize what it is, though. Once the training is done, the camera is superior. Now, what if the piece of furniture is replaced? The camera has to be retrained, but the echo sensor doesn't.

If they were used in conjunction with each other then the camera could train itself with some programming.

This is what I will be working on when I get Spock mobile. There is a connect on Spock that I will be playing with, along with 4 cameras in the front, one on each side and one in the back. It will also have 8 echo sensors around the base and possibly a PIR. I still think that I will put multiple cameras in each room to triangulate the position of Spock in a room. The triangulation data along with the sensor data on Spock should provide enough data for reliable self navigation. The encoders on the wheels will provide accurate and reliable movement data.

It may be overkill, but I like redundant solutions that work together. Will just have to see how it goes.

Hey guys, you may want to take a look at the QR code based navigation videos I did. You can point a camera at the ceiling, print QR codes, and place them on the ceiling. Each code the camera reads can stand for an XY coordinate on your home's floor plan. If you want to get fancy, you can make the QR codes using IR or UV reactive ink to make a "mostly invisible" QR code marker.
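To make the ceiling-marker idea concrete, here is a minimal Python sketch of turning a decoded QR payload into a floor-plan coordinate. It assumes the camera software has already decoded the QR code to text, and it assumes a made-up payload format of `room:x,y` (for example `kitchen:12.5,4`). Neither the format nor the `parse_marker` name comes from the videos; they are placeholders for illustration only.

```python
from typing import Tuple


def parse_marker(payload: str) -> Tuple[str, float, float]:
    """Split a hypothetical 'room:x,y' QR payload into (room, x, y)."""
    room, sep, coords = payload.partition(":")
    if not sep:
        raise ValueError(f"missing ':' in marker payload: {payload!r}")
    x_text, y_text = coords.split(",")
    return room.strip(), float(x_text), float(y_text)


if __name__ == "__main__":
    # The robot would call this each time the ceiling camera reads a code.
    print(parse_marker("kitchen:12.5,4"))
```

Putting the coordinates in the code itself means the robot needs no lookup table, at the cost of reprinting a marker if it is moved.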

Hi Dave and Jstarne1. Like J's idea, my first concept step is to plot a track across a room to a destination. I want to use a camera on board an adventure bot to track to a colored wall marker (also using an echo sensor). The second step is a colored beacon or marker on the robot and two cameras mounted 90 degrees apart on the walls in the room, preferably webcams directly connected to the computer and mounted on servos, to give the location in the room or track (X and Y travel). This gives me a track with marker points which can be used to set location on X and Y. What do you think? Can it be done and could it work? My code skills are zero but I know I can make the basic parts work. My end goal is coordinates, not destinations. This way you travel to coordinates. Ron
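For what it's worth, the two-cameras-on-servos step can be sketched with a little geometry. The Python below assumes each camera sits at a known spot in the room, and that its servo position has already been converted to a bearing in room coordinates (0 degrees along the X axis, 90 degrees along the Y axis). The robot is where the two sight lines cross. The function name and the example room layout are made up for illustration.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def intersect_bearings(cam1: Point, bearing1_deg: float,
                       cam2: Point, bearing2_deg: float) -> Point:
    """Return the point where two camera sight lines cross."""
    a1, a2 = math.radians(bearing1_deg), math.radians(bearing2_deg)
    d1x, d1y = math.cos(a1), math.sin(a1)
    d2x, d2y = math.cos(a2), math.sin(a2)
    denom = d1x * d2y - d1y * d2x
    if abs(denom) < 1e-9:
        raise ValueError("sight lines are parallel; no single crossing point")
    # Distance along camera 1's sight line to the crossing point.
    t = ((cam2[0] - cam1[0]) * d2y - (cam2[1] - cam1[1]) * d2x) / denom
    return cam1[0] + t * d1x, cam1[1] + t * d1y


if __name__ == "__main__":
    # Cameras in two corners of a 10 ft wall, both looking into the room.
    print(intersect_bearings((0.0, 0.0), 45.0, (10.0, 0.0), 135.0))
```

The result is an X and Y in the same units as the camera positions, which fits the "travel to coordinates" goal.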

I won't be putting stickers on the walls. To me it is very uninviting to have people over wondering why we have all of the stickers all over the place. The goal for me is to be able to have a system that can be dropped in place in any location which would allow the robot to map and navigate a house or business with minimal setup.

Most of my proposed solution would be database configuration for setup which would allow an application to be developed that can make this easy on the user. The "charge pad" idea also becomes quite possible.

Stickers to me wouldn't be inviting. I'm able to read them and use them for sure but they would be limited to things like verifying that I'm lined up with the charging station pad before moving onto it.

I believe that 2 cameras could work but 3 would be better.

If you knew the size of the object you were tracking (in pixels) at, say, 10 feet from the camera, you could calculate the distance from each of 3 cameras based on the size of the object in all 3 cameras. This would allow you to then know where the object is. You wouldn't be able to do this with 2 cameras, but you would be able to know roughly where it was.
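A rough Python sketch of that three-camera calculation follows. It assumes a simple pinhole-style model in which the object's apparent size in pixels is inversely proportional to its distance, and it assumes the three camera positions are known in room coordinates. The function names and sample numbers are illustrative only and not part of any existing tool.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]


def distance_from_pixels(ref_pixels: float, ref_distance: float,
                         observed_pixels: float) -> float:
    """Estimate distance, assuming apparent size shrinks as 1/distance."""
    return ref_distance * ref_pixels / observed_pixels


def trilaterate(cams: Sequence[Point], dists: Sequence[float]) -> Point:
    """Locate a point from its distances to three known camera positions."""
    (x1, y1), (x2, y2), (x3, y3) = cams
    r1, r2, r3 = dists
    # Subtracting circle 1 from circles 2 and 3 leaves two linear equations.
    a, b = 2 * (x2 - x1), 2 * (y2 - y1)
    c = r1**2 - r2**2 - x1**2 + x2**2 - y1**2 + y2**2
    d, e = 2 * (x3 - x1), 2 * (y3 - y1)
    f = r1**2 - r3**2 - x1**2 + x3**2 - y1**2 + y3**2
    det = a * e - b * d
    if abs(det) < 1e-9:
        raise ValueError("cameras are in a straight line; position is ambiguous")
    return (c * e - b * f) / det, (a * f - c * d) / det


if __name__ == "__main__":
    # Object is 100 px wide at 10 ft, so 50 px wide suggests about 20 ft.
    print(distance_from_pixels(100, 10, 50))
    cams = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
    print(trilaterate(cams, [5.0, 65 ** 0.5, 45 ** 0.5]))
```

With only two cameras, the two distance circles generally cross at two points, which is why two cameras give only a rough answer unless something else rules one of the points out.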

The stickers were for proof of concept using 1 camera. Like you say in your last statement, cameras only is the goal (similar to Josh's method).

I am busy with a work project again but the mind is going...

I want to try simple things like a target on the bot and the remote camera on a servo and use the servo position to determine a value of 1 to 180. If it works I have an "X" location. I would assume the robot camera tracking to a fixed point (test device colored paper) and a sonic distance will give me the "Y". Basic navigation? When I get some time I will try it with my adventure bot. The third camera? I would need your thoughts.
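One low-math way to read this idea, sketched in Python: it assumes the remote camera sits on a wall at the origin, that its servo angle runs from 0 to 180 degrees along that wall (90 degrees points straight into the room), and that the sonic sensor reports the robot's straight-line distance from that same wall. Those are assumptions made for the sketch, not a description of any tested setup, and the function name is made up.

```python
import math
from typing import Tuple


def xy_from_servo_and_range(servo_deg: float,
                            wall_distance: float) -> Tuple[float, float]:
    """Convert a wall camera's servo angle plus a wall distance to (x, y)."""
    if not 0.0 < servo_deg < 180.0:
        raise ValueError("servo angle must be strictly between 0 and 180")
    theta = math.radians(servo_deg)
    # The robot lies on the camera's sight line at height y = wall_distance.
    x = wall_distance * math.cos(theta) / math.sin(theta)
    return x, wall_distance


if __name__ == "__main__":
    # Servo at 45 degrees, robot 6 ft out from the camera's wall.
    print(xy_from_servo_and_range(45.0, 6.0))
```

X comes out negative when the servo is past 90 degrees, meaning the robot is on the other side of the camera along the wall.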

Dave, you and I are on the same page, but your software knowledge is WAY beyond my grasp. I am trying to do it the stone age way, LOL. The least amount of math is best for me.

I know DJ has something up his sleeve. I hope it comes soon.

Ron