bonreed

USA

Asked

Hi fellow roboters! I am a newbie and I have followed the precise instructions for emotion recognition using Blockly. However, the Cognitive Emotion plugin reports that it does not recognize a face. Also, in Blockly, Six will only say "I think you are feeling" and that's it. I added the EmotionalDescription variable, but it does not say any emotion. It says "I think you are feeling zero", or once it said "I think you are feeling neutral". Any suggestions?

Related Hardware (view all EZB hardware)

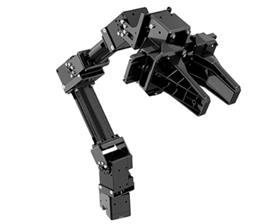

Six Hexapod

by EZ-Robot

Six hexapod robot kit: Canadian-designed, customizable WiFi 6-legged platform with 12 servos/12 DOF for dynamic motion. Available at EZ-Robot.

Wi-Fi / USB, Servos: 24, Camera, Audio, UART: 3, I2C, ADC: 8, Digital: 24

Related Robot Skill (view all robot skills)

Cognitive Emotion

by Microsoft

Uses Microsoft Cognitive Emotion cloud to analyze camera images, returning emotion descriptions and confidence for speech/output (requires internet).

Cognitive Emotion

step 1 - Start the camera

step 2 - Start Cognitive Emotion

step 3 - Add this to the Cognitive Emotion EZ-Script, next to the Blockly:

for PC

Say(("I see " + $EmotionDescription))

or

for EZ-Robots

SayEZB(("I see " + $EmotionDescription))

Cognitive Vision

step 1 - Start the camera

step 2 - Start Cognitive Vision

step 3 - Add this to the Cognitive Vision EZ-Script, next to the Blockly:

(for PC)

Say(("I see " + $VisionDescription))

for EZ-Robots

SayEZB(("I see " + $VisionDescription))

In the Camera control, click "Object", then "Train New Object". Have it record your face, name the object (your name), and save.

That's it.

"I think you are feeling" is a very strong statement for a robot though!!!

Lol Mickey - the OP stumbled across sentience

Thank you so much EZang60! I will try that. My apologies for slow thanks, I have a lot to do in preparation for college graduation. I appreciate your time and help. ~Blessings

OK, that should work; I tried it myself. If not, let me know. Good luck with your college graduation. What are your current goals?

Hi EZang60, Cognitive Vision works great! However, the Emotion Description does nothing at all. Is there some code besides SayEZB(("I see " + $EmotionDescription)) that I should be adding to the EZ-Script in Cognitive Emotion? It keeps giving an error message saying no face was recognized. In Cognitive Vision it gives a description of what I look like, including the fact that I wear eyeglasses, so all good there, but the emotion description is what I really want. Here's what I would like it to do: when a random face appears in the camera, tell what emotion the person is showing (e.g. angry, happy, sad). If the random person asks the robot how they feel, it will say the emotion. I hope I have explained it clearly enough. I graduate in 2020, but shoo, they got lots of lit tasks to complete prior to graduation, lol. I plan to be a Digital Forensics expert :-) But I am finding working with robots both challenging and interesting, lol. So we shall see.

In Cognitive Emotion, put SayEZB(("I see " + $EmotionDescription)) for your robot.

Make sure the variables are:

detected emotion = $EmotionDescription

confidence = $EmotionConfidence

Start the camera, then in the camera tracking settings click "Face".

Correct, put your face in front of the camera, lol. Assume nothing.

Digital Forensics expert, sounds good - working with robots is the now and the future

You must have your face in front of the camera for the control to recognize and detect your emotion. The control cannot detect emotion if there is no human face on the camera image. Also, ensure you are close enough to the camera to have your face in it.
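One way to keep Six from saying "I think you are feeling zero" when no face is in view is to check the confidence variable before speaking. The following is only a rough EZ-Script sketch, not the skill's official script. It assumes $EmotionConfidence is a number that stays at 0 when no face is found, and the 0.5 cutoff is a made-up starting value to tune for your own camera and lighting.

```
# Sketch only: speak the emotion when the skill reported one with usable confidence.
# Assumption: $EmotionConfidence is numeric and is 0 when no face was detected.
# Assumption: 0.5 is a hypothetical cutoff, not a value from the skill's manual.
IF ($EmotionConfidence > 0.5)
  SayEZB("I think you are feeling " + $EmotionDescription)
ELSE
  SayEZB("I cannot see your face clearly. Please move closer to the camera.")
ENDIF
```

If your robot speaks through the PC instead of the EZ-B, swap SayEZB for Say, as in the earlier posts.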

Here's a good getting-started tutorial by EZ-Robot. There are a lot of great tutorials on their website that I highly recommend. Follow this link: https://synthiam.com/Community/Tutorials/92