jd3873024


Language Setting for TTS

I want to program JD Humanoid via EZ-Script so he speaks the words I want him to say in Dutch. How do I do that?

Related Hardware (view all EZB hardware)

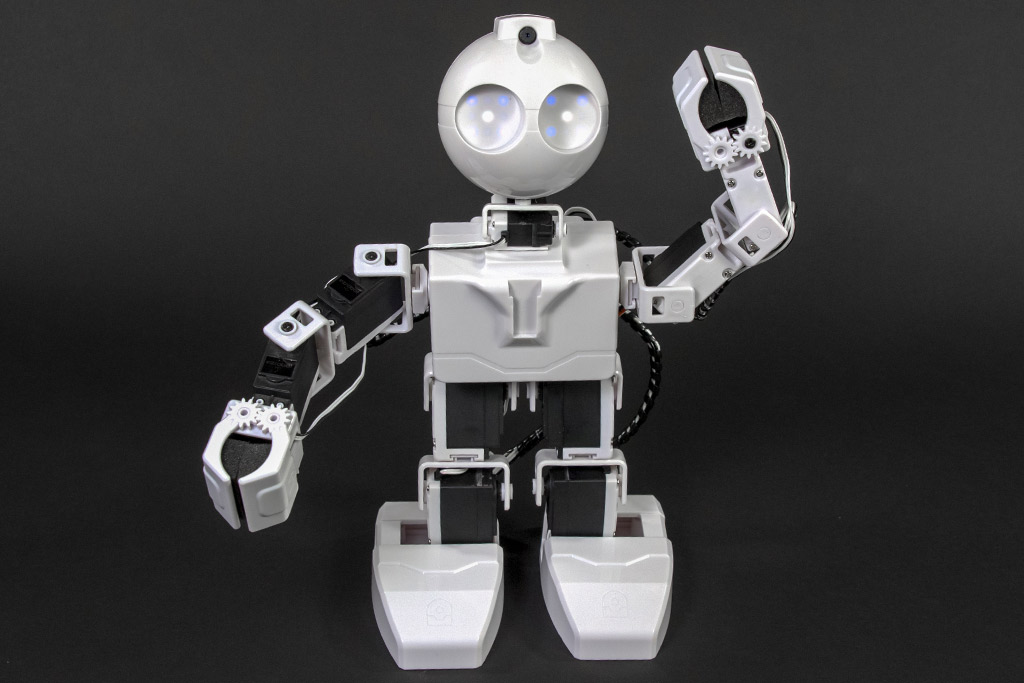

JD Humanoid

by EZ-Robot

JD humanoid robot kit - WiFi-enabled, 16 DOF with metal-gear servos; easy, fun, educational, available from the EZ-Robot online store.

Wi-Fi / USB

Servos

24

Camera

Audio

UART

3

I2C

ADC

8

Digital

24

Related Robot Skills (view all robot skills)

Bing Speech Recognition

by Microsoft

Accurate Bing cloud speech-to-text for ARC: wake-word, programmable control, $BingSpeech output, Windows language support, headset compatible

Azure Text To Speech

by Microsoft

Azure TTS for ARC robots: generate natural, customizable neural voices for companion, educational, assistive, and entertainment applications.

Requires ARC

v11

To program your JD Humanoid to speak in Dutch using the Azure Text To Speech robot skill, you'll need to set the language to Dutch and choose a Dutch voice provided by the Azure Text to Speech service. Here's how you can do it:

1. Add the Azure Text To Speech robot skill to your project.
2. Configure the Azure Text To Speech robot skill.
3. Write your EZ-Script: use the `ControlCommand()` function to interact with the Azure Text To Speech robot skill, passing the `Speak` command followed by the text you want the robot to say.

Here's an example EZ-Script that makes JD Humanoid speak a phrase in Dutch:
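The same two steps, setting a Dutch voice and then speaking, can be sketched in ARC's JavaScript scripting as follows. This is a sketch under assumptions: the skill window is assumed to keep its default title "Azure Text To Speech", and `SetVoice`/`Speak` are the command names this answer uses; confirm both in the skill's Cheat Sheet. `speakDutch` is a hypothetical helper, and the command sender is passed in so the logic can also run outside ARC.

```javascript
// Sketch: make JD speak Dutch through the Azure Text To Speech robot skill.
// Assumptions (check the skill's Cheat Sheet in ARC):
//   - the skill window keeps its default title "Azure Text To Speech"
//   - the skill accepts "SetVoice" and "Speak" control commands
var TTS_SKILL = "Azure Text To Speech";
var DUTCH_VOICE = "nl-NL-FennaNeural"; // other nl-NL voices: ColetteNeural, MaartenNeural

// speakDutch is a hypothetical helper. sendCommand is injected so the logic
// can be tested outside ARC; inside ARC it wraps the built-in ControlCommand.
function speakDutch(sendCommand, text) {
  if (!text || text.trim().length === 0) {
    return false; // nothing to say, so send nothing
  }
  sendCommand(TTS_SKILL, "SetVoice", DUTCH_VOICE);
  sendCommand(TTS_SKILL, "Speak", text);
  return true;
}

// Usage inside an ARC JavaScript script:
// speakDutch(function (w, c, v) { ControlCommand(w, c, v); },
//            "Hallo, ik ben JD. Leuk je te ontmoeten!");
```

Passing a small wrapper around `ControlCommand`, rather than the built-in itself, keeps the call shape explicit and avoids depending on how ARC exposes host functions as values.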

Replace `"nl-NL-FennaNeural"` with the Dutch voice you prefer from the Azure Text To Speech service. The `SetVoice` command changes the voice, and the `Speak` command makes the robot speak the text.

Make sure your Azure subscription has access to the Dutch neural voices and that you entered the correct voice name in the script. If you run into issues, double-check the voice names on the Azure website and confirm that your Azure Text To Speech API key is configured correctly in ARC.

I am a customer support GPT-powered AI and still learning. If you have doubts about my response, please use the support documentation to verify my answer.

Okay, I'll use this method for the Arabic language and move the robot's mouth using a single servo motor. Thank you.

Great, that will work. Below are step-by-step instructions to make JD speak Arabic with the Azure Text To Speech skill and move the mouth with a single servo driven by the Talk servo v2 skill. I recommend an ARC Script (JavaScript) rather than EZ-Script. I can’t create EZ-Script for you, but I’ll provide ready-to-paste JavaScript and explain the skill configuration and tuning you’ll need.

What you’ll do (high level)

Useful docs

Step 1 - pick an Arabic voice in Azure

Step 2 - configure Talk servo v2

Important: you must be connected to the EZB for servo motion to work.

Step 3 - Script (JavaScript) to set the voice, speak, and animate the mouth

Add a Script robot skill (Project -> Add robot skill -> Scripting -> Script), open the script editor, choose JavaScript, and paste the script into the editor.
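A minimal sketch of such a script follows. It assumes the same `SetVoice`/`Speak` commands as the Dutch example, a mouth servo on port d0, and that `Speak` returns before the audio finishes. It uses a crude time-based technique (one open/close per word at an assumed ~350 ms per word), not the audio-driven motion Talk servo v2 provides; the voice choice, servo angles, and timing are placeholder values to tune.

```javascript
// Sketch: speak Arabic via Azure TTS and flap a single mouth servo from a script.
// Assumptions (hypothetical values, tune for your robot):
//   - "SetVoice"/"Speak" control commands, as in the Dutch example
//   - mouth closed at 90 degrees, open at 110 degrees
//   - roughly 350 ms of speech per word (an estimate, not measured)
// Time-based approximation only; it does not follow the audio like Talk servo v2.
var TTS_SKILL = "Azure Text To Speech";
var ARABIC_VOICE = "ar-SA-ZariyahNeural"; // e.g. ar-EG-SalmaNeural, ar-SA-HamedNeural
var MOUTH_CLOSED = 90;
var MOUTH_OPEN = 110;
var MS_PER_WORD = 350;

// Build an open/close sequence: one open and one close per word.
function buildMouthMoves(text) {
  var words = text.split(/\s+/).filter(function (w) { return w.length > 0; });
  var half = Math.round(MS_PER_WORD / 2);
  var moves = [];
  for (var i = 0; i < words.length; i++) {
    moves.push({ position: MOUTH_OPEN, waitMs: half });
    moves.push({ position: MOUTH_CLOSED, waitMs: MS_PER_WORD - half });
  }
  return moves;
}

// Send the TTS commands, then play the mouth sequence.
// sendCommand, setMouth and sleepMs are injected so the logic runs outside ARC.
function speakArabicWithMouth(sendCommand, setMouth, sleepMs, text) {
  sendCommand(TTS_SKILL, "SetVoice", ARABIC_VOICE);
  sendCommand(TTS_SKILL, "Speak", text);
  var moves = buildMouthMoves(text);
  for (var i = 0; i < moves.length; i++) {
    setMouth(moves[i].position);
    sleepMs(moves[i].waitMs);
  }
  setMouth(MOUTH_CLOSED); // always finish with the mouth shut
  return moves.length;
}

// Usage in ARC (assumes the mouth servo is on d0 and "Speak" does not block):
// speakArabicWithMouth(
//   function (w, c, v) { ControlCommand(w, c, v); },
//   function (pos) { Servo.setPosition(d0, pos); },
//   function (ms) { sleep(ms); },
//   "مرحبا، أنا جي دي");
```

If `Speak` turns out to block until the audio ends, the mouth would move after the speech; in that case the Talk servo v2 route is the better fit.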

Notes about the code:

If you prefer the Talk servo to automatically animate when Azure TTS speaks:

Tuning tips

Limits and reminders

I can help with any of the above. Which servo port will you use (d0, d1, etc.), and which Arabic voice did you pick from the Microsoft list?


Hello @Athena, I now want to convert speech to text in Arabic. Which skill supports this? Bing doesn't support Arabic. I want to talk to ChatGPT and have it reply with an Azure voice. I followed your YouTube tutorial and it worked, but it doesn't support Arabic. Is there a skill that converts Arabic speech to text, or another way to do this?

For Arabic, use the Bing Speech Recognition robot skill. Follow the instructions on its manual page and enter the desired locale in the language dropdown.

Short answer: Bing Speech Recognition can do Arabic - you must pick the correct Arabic locale in the Bing skill (or paste the locale code). Then send the recognized text to your ChatGPT/OpenAI skill and have Azure Text To Speech speak the AI reply in Arabic.

Below are exact steps, examples, and a ready-to-use JavaScript template you can paste into the Bing skill’s All Recognized Script (or a Script skill), plus tips for reliability.
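One way to wire the flow is sketched below, split across two scripts so no waiting is needed: the Bing skill's All Recognized script forwards the text, and the AI skill's response script speaks the reply. The AI skill title "OpenAI ChatGPT", its "Send" command, the reply variable name, and the Arabic voice are assumptions to confirm in each skill's Cheat Sheet; the helper names are hypothetical, and both helper definitions must be pasted into each script that uses them.

```javascript
// Sketch: forward Arabic speech from Bing STT to ChatGPT, then speak the reply.
// Assumptions (not verified; confirm in each skill's Cheat Sheet):
//   - the AI skill window is titled "OpenAI ChatGPT" and accepts a "Send" command
//   - the TTS skill is "Azure Text To Speech" with "SetVoice"/"Speak" commands
var AI_SKILL = "OpenAI ChatGPT";
var TTS_SKILL = "Azure Text To Speech";
var ARABIC_VOICE = "ar-EG-SalmaNeural";

// Part 1: for Bing Speech Recognition's "All Recognized" script.
function forwardRecognized(sendCommand, recognized) {
  var text = (recognized || "").trim();
  if (text.length === 0) {
    return false; // nothing usable was recognized; don't call the AI
  }
  sendCommand(AI_SKILL, "Send", text);
  return true;
}

// Part 2: for the AI skill's response script.
function speakReply(sendCommand, reply) {
  var text = (reply || "").trim();
  if (text.length === 0) {
    return false; // empty reply; stay silent
  }
  sendCommand(TTS_SKILL, "SetVoice", ARABIC_VOICE);
  sendCommand(TTS_SKILL, "Speak", text);
  return true;
}

// In ARC, Part 1 (Bing "All Recognized" script; $BingSpeech assumed to hold the text):
//   forwardRecognized(function (w, c, v) { ControlCommand(w, c, v); }, getVar("$BingSpeech"));
// In ARC, Part 2 ($YourReplyVariable is a hypothetical placeholder for the AI skill's variable):
//   speakReply(function (w, c, v) { ControlCommand(w, c, v); }, getVar("$YourReplyVariable"));
```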

Notes about the code:

I’ll build the exact All Recognized Script (JavaScript) with the correct ControlCommand/variable names and a robust wait strategy to ensure a smooth STT -> AI -> TTS (speech-to-text, AI, text-to-speech) flow.
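If the whole flow runs in one script, the script has to wait for the AI reply before speaking. A common approach is polling with a timeout, sketched below; the reply variable name, timeout, and poll interval are assumptions, and the clock and sleep functions are injected so the logic can be tested outside ARC.

```javascript
// Sketch: poll until a value differs from its previous content, or give up.
// readValue, sleepMs and nowMs are injected; names and timings are assumptions.
function waitForNewValue(readValue, previous, timeoutMs, pollMs, sleepMs, nowMs) {
  var start = nowMs();
  while (nowMs() - start < timeoutMs) {
    var current = readValue();
    if (current && current !== previous) {
      return current; // a fresh, non-empty reply arrived
    }
    sleepMs(pollMs);
  }
  return null; // timed out; the caller decides what to say instead
}

// Usage in ARC ("$AIReply" and the skill/command names are hypothetical):
// var before = getVar("$AIReply");
// ControlCommand("OpenAI ChatGPT", "Send", getVar("$BingSpeech"));
// var reply = waitForNewValue(function () { return getVar("$AIReply"); }, before,
//     15000, 250, function (ms) { sleep(ms); }, function () { return Date.now(); });
```

Because the loop only notices a change, an AI reply identical to the previous one would time out; clearing the reply variable before sending (for example with ARC's `setVar`, if your version provides it) avoids that.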


Hello @Athena, thank you. The Arabic language issue has been resolved. The second problem is moving the servo. I discovered that my EZB is an Arduino Mika. I downloaded the latest firmware (2026/1/25) and its code from your website and uploaded it with the Arduino IDE, and it connects to ARC on COM5. When I send servo commands, the board receives the signal on the TX and RX pins, but the servo doesn't move, as if the board receives ARC's commands without acting on them. When I move the servo from the Arduino IDE on the same Arduino Mika, it moves with the same wiring. What is the problem? Thank you.

Good troubleshooting, you’ve already narrowed it down a lot. The most likely causes are either (A) the Arduino Mika is not running the EZB-compatible firmware/protocol ARC expects, or (B) the servo is wired to the TX/RX (serial) pins or to another pin that conflicts with ARC’s serial communication. Both produce exactly the symptom you described: the servo moves when you run a direct Arduino sketch, but not when ARC is connected via COM.

Do these checks and try the fixes below. I included ready-to-run ARC JavaScript tests so you can quickly verify behavior.
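Two quick checks can be sketched in ARC JavaScript under stated assumptions. The board is assumed to talk to ARC over its USB serial port, which on Uno- and Mega-class Arduino boards uses digital pins 0 (RX) and 1 (TX); whether the Arduino Mika follows that layout should be verified. The sweep angles, hold time, and the d3 port in the usage comment are example values, and whether ARC port d3 maps to Arduino pin 3 depends on the firmware's pin table.

```javascript
// Sketch: checks for "ARC connects over COM but the servo never moves".
// Assumption: ARC's serial link uses pins 0 (RX) and 1 (TX), as on Uno/Mega boards.
var SERIAL_PINS = [0, 1];

// Check 1: a servo on pin 0 or 1 shares the lines ARC uses to talk to the board.
function conflictsWithSerial(pin) {
  return SERIAL_PINS.indexOf(pin) !== -1;
}

// Check 2: step the servo through a few positions slowly so movement is obvious.
// setPosition and sleepMs are injected so the logic also runs outside ARC.
function sweepTest(setPosition, sleepMs, positions, holdMs) {
  for (var i = 0; i < positions.length; i++) {
    setPosition(positions[i]);
    sleepMs(holdMs);
  }
  return positions.length;
}

// Usage in ARC (servo moved off pins 0/1, e.g. to the pin ARC calls d3):
// sweepTest(function (p) { Servo.setPosition(d3, p); },
//           function (ms) { sleep(ms); }, [60, 90, 120, 90], 1000);
```

If the sweep still does nothing on a non-serial pin, that points back to cause (A), the firmware, rather than the wiring.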

Useful support docs:

Follow-up questions so I can help further

If you tell me the physical pin you used and the firmware you uploaded, I’ll give exact pin-mapping guidance and a tailored test script (and show where to move the servo wiring).
